Digital, Virtual, and Blended Learning Explained: Differences, Design Principles, and Use Cases

As digital learning becomes embedded across education systems, it’s as important as ever for educators and instructional leads, edtech teams, funders and funding seekers to understand the nuances of core digital learning designs, solutions, and approaches, and how these interface with a larger framework of instructional best practices.

Keep reading for nuanced insights into the ways “digital,” “blended,” and “virtual” learning are used, how these approaches and designs overlap, and what kinds of distinct institutional challenges and practices each of these approaches emphasizes. We’ll also break down how these insights guide specific education sector actors, from edtech design teams to educators to institutions or companies pursuing grant funding.

In the realm of instructional practices, educators need to be wary of pinning their hopes on mere replication: what’s “proven” to work in one setting may not get the same results in other settings. This makes it essential to understand how distinct approaches to digital learning are likely, or not, to complement the instructional best practices and formats in the setting you want to support.

Billions are spent annually on edtech that is some combination of underused, inequitably used, and ineffectively used.

— InnovateEDU

Introduction

Notice that InnovateEDU researchers didn’t say billions are spent annually on ineffective edtech solutions. Product efficacy (or lack of efficacy) is not the highlight; it’s the problems of integration, implementation, larger best practices, and change leadership that are under scrutiny.

Despite this gap in innovation practice, digital learning now shapes how instruction is designed, delivered, evaluated, and funded. Edtech designs, and the promises and opportunities these tools (now AI-enhanced) offer, are evolving at a pace that efficacy studies cannot keep up with. According to recent reporting, “K-12 education technology spending alone reached $30 billion in 2024, and it’s projected to nearly double by 2033.”

Yet across education sector roles and contexts, terms like digital, virtual, blended, and connected are still often used interchangeably, despite distinct design implications and the challenges they’re best aligned to solve.

As a result, education leaders, product teams, and grant writers often need deeper insight into what these models actually entail and what best practices look like across these formats. These insights are useful for design, adoption, funding efforts, and implementation planning.

This post offers both foundational and nuanced insights into

the major digital learning formats in use today and their distinct characteristics and common use cases

which enduring instructional practices and universal learning principles digital formats need to incorporate to ensure reliable designs responsive to standards-based instruction, learner variability, and the learner experience

actual case studies of the ways different institutions deploy specific digital formats — with tailored agility and creativity — for innovation that helps overcome a variety of sticky challenges to student access and success

For edtech teams, district and institutional leaders, nonprofits, and grant seekers alike, it’s crucial to look beyond isolated edtech tools and features. The goal is to develop expertise in deploying digital learning formats in ways that respond to each institution’s localized culture, challenges, and goals, while integrating coherent instructional principles within edtech tools and within the larger instructional designs they support. This matters not only for improving learning outcomes, but also for building trust, demonstrating readiness, and aligning digital learning with prevailing research-based expectations.

Questions we’ll answer:

How do common digital and blended learning models differ in structure and purpose?

What are the key differences between digital, virtual, and blended learning that matter most for design and evaluation?

Which effective instructional practices should be integrated thoughtfully across these formats?

Which designs ensure that connected classrooms and device-assisted instruction support knowledge transfer, critical thinking skills, and student agency, collaboration, and inquiry?

Why do coherence, accessibility, and learning-science alignment endure as pillars of good practice even as tools and platforms evolve?

This article also builds directly on key insights we shared in other posts about cognitive science and instructional design — calling attention to the ways the science of learning informs digital learning environments.

Contents:

I. Defining the Landscape: Digital, Virtual, and Blended Learning

II. Core Digital and Blended Learning Models

III. Design Principles That Transfer Across Modalities

IV. Learner Experience Design

V. Artificial Intelligence in Digital Learning

VI. Common Implementation Challenges

VII. Case Studies: Matching Approaches to Challenges

VIII. Implications for Leaders, Designers, and Funders

IX. Conclusion

I. Defining the Landscape: Digital, Virtual, and Blended Learning

Why distinctions matter for design, evaluation, and funding alignment

In education, terminology guides practice, so shared understandings are crucial. This is especially true in digital learning, where similar tools can be used in fundamentally different learning formats, with diverse learning populations, and in diverse professional cultures, and where funders, grant reviewers, and school system decision-makers may not always attach the same meaning to the same concepts or terminology.

At the same time, how educators craft and combine digital learning formats will shape how responsive an approach is to key learning challenges, system challenges, and system metrics:

how instructional time is structured

how student learning is supported and assessed

how equity and access are addressed

how programs align with federal and state guidance

how proposals and products signal seriousness and rigor

➔ For funding initiatives, a clear understanding of digital learning modalities and applications can help organizations strengthen alignment with federal and state guidance.

➔ For organizations or edtech firms seeking to improve student learning or professional learning, nuanced insights into how to integrate and align edtech tools with core instructional designs, use cases, and research-based findings reflect a higher level of expertise, a foundation for more successful adoption, and the potential for better results and outcomes for learners.

A. Digital learning: the basics

Digital learning refers broadly to instruction that uses digital tools or environments to support learning. It can occur:

in physical classrooms, online spaces, or hybrid settings

synchronously, asynchronously, or through a combination of both

as the primary instructional environment, or as a targeted support (practice, feedback, creation, collaboration)

A digital learning ecosystem typically incorporates:

broad access to and use of personal digital devices, such as laptops or iPads

educational software and online learning platforms

internet access

cloud-based communication and collaboration tools

navigation dashboards that help students follow the right learning sequence or access different tools or support resources

communication dashboards that help educators coordinate communications, manage feedback, and track student progress

data dashboards that help students track their learning and help teachers more efficiently retrieve actionable data for assessments, instructional planning, and grading

Digital learning includes a wide range of modalities and formats, and implementation is a complex, iterative process.

Technology in education is not a silver bullet, but it is the bow that can launch many arrows.

— Audrey Watters, Education Technology Journalist & Blogger (hackeducation.com)

Digital learning can mean everything from streamlining teacher workflow to scaling access to key learning content, or helping students explore and build virtual 21st-century workplace skills.

Since digital learning has such an expansive scope, thoughtful designs that use, adopt, or integrate digital tools will usually do so within the framework of more universal best practices and designs. Simply pairing students with devices or adopting digital formats in a rote, prescriptive fashion will not be a strong approach.

Flawed deployment and unfruitful change efforts will often lead, in turn, to a blame game that lowers morale and makes stakeholders more skeptical when asked to embrace a new round of instructional innovation.

Education policy, education institutions, and even teachers themselves have often been the target of blame, but in this moment technology itself is becoming the scapegoat.

A rising chorus of criticism from real or pseudo researchers and from public officials is beginning to blame, indiscriminately, the broad education technology sector for current lapses in student learning outcomes. In this moment, the ubiquity of devices, digital platforms, and online media outlets is creating an ecosystem that distracts students from enduring learning. In the education market, this is making “digital and AI disruption” a target of backlash, as digital tools and a vaguely defined notion of “screen time” become the scapegoat for both real and perceived learning loss.

🔑 A rising chorus of criticism from real or pseudo researchers and from public officials is beginning to blame, indiscriminately, the broad education technology sector for current lapses in student learning outcomes. The ubiquity of devices, of digital platforms, and of online media outlets is creating an ecosystem that distracts students from enduring learning and from deepening literacy skills, making “digital and AI disruption” the source of growing public skepticism and distrust. The real culprit is almost certainly a larger framework of complexity: the same kinds of dynamics that have made it hard for schools, for many decades, to institute and sustain agile and reliable approaches to instructional innovation.

Stepping back, we can see of course that there are many digital approaches and edtech tools driving rich learning environments, increasing learner access, or boosting and streamlining the work of instructional planning and assessment.

It’s also clear that the learning losses that correlate with digital diffusion are not anchored in edtech deployment as such, but in longstanding challenges to transforming professional culture within school settings. In the current moment, these complexities show up in studies highlighting the ineffective uses made of edtech solutions. They are the same kinds of dynamics that have made it hard for schools, for many decades, to institute and sustain agile and reliable approaches to systemic professional learning and instructional design in the midst of ever-accelerating changes in the knowledge, technology, and workplace landscapes.

This framework of insights, failures, and challenges implies the need for

a systematic, agile, and iterative approach to deploying digital learning formats

stronger bridges between educators and edtech designers for shared insights

anchoring change models in core (and enduring) principles of instructional design and learning science

Transformation is about more than technology. The goal is to enable a complete re-imagining of learning, anytime, anywhere, and to be ruthless in studying and adjusting when and how technology makes things better.

— Bruce Dixon, Anywhere Anytime Learning Foundation (quoted in Transforming Education, Microsoft Education, 2018)

B. Virtual learning: flexible teaching and learning across time and distance

Virtual learning refers to instruction transmitted digitally. While it can conjure up a vaguely defined use of online learning tools, the emphasis is typically on using a remote learning approach to enhance access to participation for more learners, or for learners or institutions seeking to overcome geographical distances or scheduling conflicts.

Common examples of fully remote models include:

virtual schools

online credit recovery programs

fully online higher-education or professional programs

Virtual learning has exceptional capacity for flexible programming and broad access, particularly for learners constrained by geography, schedules, or life circumstances.

Virtual learning designs — in light of the lack of “face-time” between educators and students — should place significant weight on clarity of expectations, feedback systems, and learner support structures. This will help ensure fully remote learners benefit from coherent and accessible onboarding and learning experiences in the absence of in-person and synchronous interactions with educators. In short, virtual learning tends to be about offering increased access to enrollment, but that doesn’t necessarily include a high level of access to learning and curriculum resources — factors which will vary across virtual learning programs just as they vary across all educational offerings.

Beyond distance learning…

While virtual learning most commonly refers to fully remote instruction (also called distance learning in some contexts), that’s not the only approach. A virtual component or remote learning strategy can also supplement a larger instructional design, including designs under the umbrella of in-person learning, such as flipped classroom formats (more on flipped classrooms below).

Synchronous vs. Asynchronous Modalities Can Shape the Virtual Learning Experience

synchronous: learners joining instructors in real time, though remotely

asynchronous: learners accessing learning materials and submitting assignments online at times and locations that suit them

Understanding the key opportunities that virtual learning formats offer as well as the constraints that can challenge learners or impede the learning process has important implications for designing digital learning tools and platforms to be deployed in these kinds of programs and, by extension, for leaders positioning an institution or nonprofit for funding, accreditation, or growth and expansion.

C. Blended learning: integrating in-person and digital instruction

Blended learning combines or “blends” in-person and digital instruction in an intentional way. At its best, it isn’t a rote back-and-forth between modalities but a purposeful design that seeks to take advantage of specific benefits that in-person vs. digital formats offer. This approach creates an overarching framework for making decisions about:

how instructional time is used

how teachers and students interact

how practice, feedback, and collaboration are distributed

For example, many blended learning models exploit digital tools with the aim of making better use of in-person instructional time. In this approach:

in-person time is dedicated to high-value interactions — such as dialogue, discussion, one-on-one interactions, modeling, live question & answer formats, synchronous collaboration…

digital/remote learning time is used for more passive or autonomous activities — such as listening to lectures, watching slideshows, viewing informational videos, or doing purely independent practice...

The goal is a coherent instructional experience rather than a collection of disconnected tools, with a format that uses digital tools as a way to make the best use of in-person instructional time.

However, at risk of stating the obvious, as AI interfaces and capabilities become more sophisticated, safer and more reliable, more nuanced and responsive, and “more human,” we have to imagine that the dichotomy between in-person instruction and remote digital learning, along with their respective use cases and limitations, will quickly fade from relevance!

🔑 The distinctions that tend to be at the core of “blended learning” — a distinction between active and responsive modes of in-person learning vs. more passive modes of digital or remote learning — may fade from relevance as AI interfaces and capabilities become more sophisticated, safe… and more human.

Digital learning — how is success measured?

It probably goes without saying that for both digital and conventional formats, the overarching goals will be similar:

to accelerate, improve, or scale the learning of critical academic objectives

to pursue a “whole student” approach to learning that fosters social learning skills, self-efficacy, and character growth alongside the assimilation of subject-matter knowledge and skills

to ensure that key design features make instructional formats and learning experiences responsive to local needs and goals

This means, even when emphasizing innovation and transitions to digital learning, education leaders should remember this key design principle:

Learners should be at the center of what happens in the classroom, with activities focused on their cognition, learning process, and growth (“7 Principles for Learning,” Organisation for Economic Co-operation and Development, 2010).

In some contexts, however, interest in pursuing “digital” or “virtual” designs may be centered less on instructional efficacy as such and more on other metrics, such as scaling an instructional resource, streamlining administrative tasks (timesaving), or increasing enrollment access.

This means that how one talks about design and specific models, and how one measures their impact and efficacy, depends on these kinds of crucial distinctions and underlying objectives. That said, at some juncture, educators or edtech teams will also have to consider how specific solutions and designs will support, align with, or constrain the learning experience, the existing learning and teaching culture and practices, and the ultimate learning objectives.

II. Core Digital and Blended Learning Models: Not Prescriptions or Turnkey Solutions — But Proven Design Templates

Digital and blended learning models should, like other instructional formats, put tested instructional practices to work and integrate them effectively. Across the models, distinguishing factors of strong design include:

effective pacing and coherent structure and sequencing

student autonomy and support

teacher roles

assessment and feedback

emphasis on the most relevant learning objectives

No single digital learning format is universally “best.” What matters is understanding how a given model is better adapted to specific kinds of needs, goals, or challenges.

A. Common designs supported by digital, virtual, or blended approaches

Station Rotation

Students rotate among stations that may include teacher-led instruction, collaborative work, and independent digital practice. This model works best when rotation time is used for targeted instruction and feedback. A default to low-value or disconnected tasks is likely to slow learner progress.

Lab Rotation

Students rotate into a lab or dedicated space for digital learning. This approach works best when digital activities are tightly aligned with core curriculum and assessment, and is less effective when lab experiences become detached from classroom instruction.

Flipped Classroom

Direct instruction is shifted to an asynchronous (independent) learning format, such as a recorded lecture or demonstration, while in-person time is used for application and guided practice. This model works best when access and expectations are clear; it can break down when in-class time collapses into reteaching what was covered in the asynchronous session.

Flex Model

Digital learning serves as the primary instructional mode, with teachers facilitating targeted support. This approach requires explicit routines, scaffolds, and support structures, along with a careful effort to anticipate just how much support different learners truly need to succeed with this format, rather than overrelying on the learner’s independence and agency.

Enriched Virtual

Students complete most learning online, with periodic in-person sessions. This model works best when face-to-face time is intentionally designed for targeted interaction, support, and feedback rather than treated as a mere informal or rote check-in.

Illustrative vignette — blended learning supports a range of instructional formats

At a middle school implementing blended learning, different teams use different models based on an overarching instructional purpose.

In sixth-grade math, teachers use a station rotation model so the instructor can pull small groups for targeted feedback while other students work on focused digital practice.

In eighth-grade science, a flipped classroom approach shifts content review outside of class, freeing in-person time for labs and discussion.

A flex model is adopted to support a small group of students following an alternative pathway, with students completing much of their work digitally and supported by structured check-ins and coaching from teachers.

Across classrooms, the digital resources and approaches are similar, but the learning experience and format differ, because each model is adopted and adapted, as needed, to support specific goals, routines, and student needs.

B. Connected classrooms

Educators sometimes think of “connected classrooms” as simply describing infrastructure, such as video-enabled instruction across sites. This makes sense intuitively, but in practice, a connected classroom approach typically assumes some recognized, targeted modalities for enhancing learning, such as:

networked learning across locations — enhancing access to expertise and shared experiences

device-assisted instruction within classrooms — affording more opportunities to foster agency, collaboration, inquiry, and creativity

Cross-site connections

When whole sites and/or districts have sufficient cross-connectivity, there are also opportunities to combine cross-site learning with intentional in-class learning activities.

Classrooms may include synchronous shared instruction across schools or campuses, as well as asynchronous collaboration through shared workspaces, artifacts, and discussion threads.

Common applications include:

expanding access to advanced coursework in rural or under-enrolled schools

supporting continuity in alternative or continuation programs

offering specialized electives and pathways without duplicating staffing

From a funding and policy perspective, these models often align with priorities related to equity, access, and course availability.

Distributed expertise

Rather than centralizing instruction, connected classrooms can distribute expertise, such as:

specialized instructors serving multiple sites

external experts embedded into projects or units

shared instructional responsibility that doesn’t isolate learners

Clearly, both cross-site features and distributed expertise can also be leveraged to create opportunities and benefits for professional learning as well as student learning.

What makes for effective use across these approaches is ensuring instruction remains coherent, accessible, and relational — so students and professionals experience connected learning that feels highly relevant, engaging, and authentic.

Device-assisted instruction within classrooms

Connected classrooms also reshape in-person learning by integrating student devices into everyday instruction.

1. When intentionally designed, device-assisted instruction can support student agency and flexible learning pathways through:

independent, small-group, and whole-class learning

flexible pacing within shared learning goals

differentiated support that doesn’t fragment the curriculum

These capabilities align naturally with Universal Design for Learning, making differentiated approaches easier to offer while ensuring engaging content and giving students greater voice and agency in how they demonstrate what they’ve learned.

2. Devices can also play an integral role in enabling and enriching project-based, inquiry-based, and constructionist learning, providing tools for:

research and inquiry

drafting, modeling, and iteration

collaboration and feedback

When paired with clear learning goals and scaffolding, these device-assisted formats use structure to eliminate distraction and dilution of focus. That structure supports flexible learning, active processing, and access to diverse learning resources, and it helps educators find more opportunities to enhance student voice and agency and make learning feel more authentic.

C. Building collaboration and communication skills

Digital and blended environments also support instruction in 21st-century collaboration and communication skills, with structured, streamlined features that facilitate:

collaborative problem-solving

multimodal communication

peer critique and revision

It’s important to remember, however, that these outcomes depend on task design, norms, and facilitation, not simply on access to devices:

articulating clear learning goals (backwards design)

layering in adaptive and targeted scaffolding (rather than open-ended exploration alone)

including explicit instruction in relevant soft skills and norms (21st-century skills)

providing supportive in-person facilitation as needed (teacher-as-coach model)

Common misconceptions to keep in view…

Devices do not automatically produce productive engagement.

Connectivity and access as such do not on their own ensure instructional quality.

Group work does not guarantee constructive participation and active collaboration.

The central design question is always: How does technology reorganize learning activity in service of clear instructional goals and a meaningful learner experience and effort?

Illustrative vignette

A small rural high school uses a connected classroom model to offer advanced science coursework.

Students participate in synchronous seminars led by a specialist serving multiple sites.

Locally, students collaborate in small groups or teams using devices to analyze data and develop hypotheses, while an on-site teacher facilitates discussion and supports continuity.

Between sessions, students collaborate asynchronously with peers at other sites on shared project artifacts.

This approach expands access while sustaining crucial local relationships and collaborations, embeds agency in task design, and maintains instructional coherence across classrooms and schools.

III. Design Principles That Transfer Across Modalities

Although digital learning formats vary widely, effective systems tend to rely on a shared set of design priorities that hold across formats and contexts.

Whether instruction takes place fully online, in blended classrooms, or across connected sites, certain priorities — evidence-based practices and designs — recur across research, federal guidance, and documented implementation efforts. These best practices should be supported by digital learning formats, not supplanted by them.

In other words, digital, virtual, and blended learning principles are not platform-specific tactics or trend-driven models; they are in fact effective design templates that offer recognized, coherent, and tested approaches for helping educators implement instructional designs with greater flexibility and efficacy and/or greater consistency, reach, and universal access.

🔑 Digital, virtual, and blended learning principles are not platform-specific tactics or trend-driven models but are design templates that can readily support evidence-based practices and designs that recur across research studies, federal guidance, and documented implementation efforts.

A. Universal design for learning

Universal Design for Learning (UDL) functions most effectively as a baseline design orientation rather than a checklist of accommodations.

Leaders should understand that UDL encompasses a shift from earlier notions of “universal access” (which focused primarily on removing barriers after the fact).

UDL starts from the premise that learners bring different aptitudes, experiences, and challenges — and discourages an approach where design features or benchmarks are based on an abstract notion of an “average learner” profile.

Learner variability is not something to adapt to when it’s observed in specific settings but a universal dynamic of all learning settings. The emphasis is on proactive designs that anticipate this intrinsic variability.

As a result, instructional approaches should reduce unnecessary barriers while providing structured flexibility that supports the learning experience and learning success of individual learners as such. They should do so while anticipating any number of overlapping experiential or “individual” factors impacting learning, instead of applying assumptions about specific kinds of learner profiles.

This framing aligns closely with guidance from organizations such as CAST, as well as with federal priorities related to equity, accessibility, and inclusive design.

These principles may imply some minor points of friction with the emphasis cognitive research findings place on the essentially universal features of brain processing, across diverse individuals, although the two approaches are largely complementary.

What is CAST?

CAST is a nonprofit research and development organization that works to expand learning opportunities for all individuals through Universal Design for Learning.

Founded in 1984 as the Center for Applied Special Technology, CAST has earned international recognition for its innovative contributions to educational products, classroom practices, and policies. Its staff includes specialists in education research and policy, neuropsychology, clinical/school psychology, technology, engineering, curriculum development, K-12 professional development, and more.

In digital learning contexts, UDL emphasizes reducing unnecessary barriers and providing flexibility and adaptability:

clear instructions and expectations

consistent navigation and routines

accessible formats and interfaces

multiple ways to engage with content

multiple ways to demonstrate understanding

options that support pacing, scaffolding, and learner agency

When applied well, UDL does not imply unlimited choice or unstructured personalization, but is a broad qualitative pillar of design intended to ensure that instructional tools boost engagement, enhance instructional coherence in each moment for each learner, and make it easier for all students to progress through the same curricula.

For product teams, program designers, and grant writers, this distinction can be important. Referencing and applying UDL in efforts to reduce barriers to participation and build student agency will be more compelling when UDL is implemented as a design foundation and constant, pursued creatively throughout the design process with a high level of efficacy and alignment. That is more persuasive than a rote integration of UDL mandates or vague claims about features that support free-ranging, idiosyncratic forms of “personalization” or “customization.”

🔑 UDL does not imply unlimited choice or unstructured personalization, but is a broad qualitative pillar of design intended to ensure that instructional tools boost engagement, enhance instructional coherence in each moment for each learner, and make it easier for all students to progress through the same curricula. Applying and referencing UDL imaginatively and robustly will be more compelling and better aligned with research-based expectations than vague claims of “personalization” or “customization.”

B. ESSA benchmarks for evidence-based reliability

Another important frame of reference for evidence-based design is outlined by the federal Every Student Succeeds Act (ESSA). Under ESSA guidance, district and school leaders are encouraged to seek out tools and interventions that have been rigorously evaluated and have correlated with strong learning outcomes.

ESSA’s FOUR TIERS & FIVE FACTORS

Education programs and interventions are commonly ranked into one of four evidence tiers, ranging from Strong (Tier I) to Demonstrates a Rationale (Tier IV), as indicators of their promise, reliable design features, and documented performance.

The five factors that inform evidence ratings are:

study design

study results

findings from related studies

sample size and setting

match (how closely the student sample and setting where performance was measured overlap with the target setting)

While ESSA does not prescribe specific instructional models, federal funding agencies will typically favor adoption choices backed by established research and evidence of effective performance in similar settings, alongside strategies for monitoring and continuous improvement.

Digital learning initiatives that reflect principles such as UDL (Universal Design for Learning), alignment with the science of learning, and instructional coherence are often easier to position within ESSA’s evidence framework — particularly when supported by research citations, implementation logic, or local evaluation plans.

ESSA Caveats…

While it’s crucial to understand this framework and the expectations it can create for pursuing federal grants, for edtech product research and development, and for education leaders who are vetting them, it’s also important to remember that ESSA is based on assumptions about “outcome replication” that may not be reliable or realistic across diverse educational settings and instructional contexts. Furthermore, as the pace of innovation accelerates exponentially in the AI age, this kind of cumbersome study-driven approach may fail to keep pace with accelerating edtech iterations and innovations.

🔑 While it’s crucial to understand this framework and the expectations it can create for pursuing federal grants, for edtech product research and development, and for education leaders who are vetting them, it’s hard to see how this study-driven approach will ever keep pace with accelerating edtech iterations and innovations.

C. Alignment with the science of learning

Digital formats do not change how the human brain processes information, so effective digital instruction should align with insights from cognitive research.

These principles remain relevant in digital environments, regardless of whether learning occurs online, in person, or in blended formats.

What science of learning alignment looks like:

→ Managing cognitive load

minimizing extraneous interface noise

sequencing information deliberately

limiting the number of genuinely new ideas introduced at once

→ Supporting durable learning

embedding retrieval practice throughout instruction (the testing effect)

revisiting key ideas over time (spaced practice)

encouraging learners to explain, apply, and organize knowledge (higher order thinking and building mental schema)

→ Distinguishing engagement from cognition

recognizing that activity (clicking, watching, producing) is not synonymous with learning

blending knowledge acquisition with student voice, choice, and agency while leveraging higher order thinking tasks in order to help students assimilate concepts more deeply and become more independent learners

What interferes with science of learning alignment:

poorly designed interfaces

excessive media elements

unclear task sequencing or constant context-switching (which can quickly overwhelm working memory)

When edtech products and digital learning designs do align with proven learning principles, outcomes are far more likely to be consistent across diverse settings and when scaling up within a given setting.

D. Instructional coherence

Instructional coherence reflects how well curriculum, instruction, assessment, and professional learning operate as an integrated system.

In digital contexts, coherence is often threatened by:

uneven implementation

disconnected instructional choices

lack of explicit and transparent learning objectives

poor alignment between learning activities and modalities and learning objectives

tool sprawl

Effective systems ensure coherent and targeted learning through:

alignment with well-defined and enduring learning goals

consistent instructional routines

coordinated assessment practices

collaborative planning

data-informed refinements of instructional targets and practices

Technology deployments support learning most effectively when guided by structures that ensure clarity, continuity, scale, efficiency, reach, and flexibility, as opposed to piecemeal adoptions of stand-alone solutions.

Core pillars of instructional coherence:

Standards and goal alignment — Ensures learning progresses in a logical, cumulative sequence

Consistent instructional routines — Reduces cognitive load and supports learner confidence

Coordinated assessment — Produces usable evidence of learning progress and gaps during the learning cycle (targeted formative assessment) along with direct evidence of relevant learning outcomes (summative assessment)

Collaborative planning — Prevents curriculum drift across teachers, courses, or sites

Data-informed refinement — Enables adjustment based on evidence, not intuition

➝ For districts and institutions, this often means prioritizing fewer, better-aligned tools over expansive but loosely integrated ecosystems.

➝ For edtech providers, it means making design decisions informed by real insights into key instructional system challenges and reliable pedagogical practices.

From a funding or purchasing evaluation standpoint, coherence is increasingly visible in the decision-making process. Reviewers and decision makers are more likely to trust initiatives that clearly articulate how digital components align with curriculum goals, assessment strategies, and educator practice.

🔑 As with other instructional reforms and approaches, digital learning initiatives with strong alignment — to foundational instructional practices, strategic assessment processes, and effective professional practices — will demonstrate greater credibility.

Why integrating and aligning these principles matters

Universal Design, learning science, and instructional coherence are not independent concerns but reinforce one another:

UDL reduces barriers and supports agency, making cognitive effort more productive.

Learning science principles ensure that effort is directed toward durable understanding rather than surface activity.

Instructional coherence ensures that these practices scale across classrooms, courses and course sequencing, and different school settings with less fragmentation.

Together, these principles provide a shared, authoritative, and reliable design language, one that helps educators, designers, and funders move beyond mere adoption and toward digital learning systems that are defensible, reliable, enduring, adaptable, and scalable.

IV. Learner Experience Design

Even when digital learning is aligned with strong instructional principles, how learners actually experience instructional interactions will impact outcomes. Learner Experience Design (LXD) focuses on this human layer: how clarity, usability, feedback, and emotional cues influence learners’ willingness and ability to engage in meaningful cognitive effort.

This means user experience matters a lot in digital environments, where learners must navigate interfaces, manage attention, and self-regulate more actively.

Even if design teams can hire experts trained in end-user design, this design component has many overlapping layers in the education context, and there is no simple, one-dimensional end-user profile or psychology dynamic to apply.

Even small friction points, such as

confusing navigation,

unclear expectations, or

inconsistent routines,

can quietly drain cognitive resources or open doors to distraction, undermining productive engagement and meaningful progress.

Principles of Effective LXD

Human-Centeredness

Designers ground decisions in an understanding of learners’ needs, constraints, and contexts, often informed by user research, observation, or learner personas. Here, design teams should be working with frontline insights into relevant kinds of learners and settings, in addition to technical design guidelines.

Contextual Awareness

Learners don’t learn robotically but benefit from understanding why they are learning a skill and how it connects to real-world goals, academic pathways, or professional applications.

Active Learning

Effective LXD prioritizes interaction over passive consumption. Discussion, collaboration, problem-solving, simulation, and metacognition typically offer much deeper learning support and facilitate lasting learner engagement vs. static content delivery alone.

Just-Right Difficulty

Drawing from the “moderate discrepancy hypothesis,” LXD aims to optimize learning challenge levels, avoiding tasks and delivery modes that are so simple they disengage learners or so difficult they overwhelm them.

Personalized Feedback

Digital tools can support timely, targeted, and actionable feedback that helps learners understand gaps between current performance and desired outcomes, strengthening learning, helping ensure better learner self-regulation, and helping learners build confidence and self-efficacy for the next leg in their journey.

Applying the principles

Across effective digital and blended learning systems, several experience-oriented best practices can guide effective decision making, planning, and iterations:

Clarity before complexity

predictable structure and navigation

clear “what to do next” cues

consistent instructional routines

Visible instructor presence

Learners benefit when instructors:

communicate expectations clearly

provide timely, actionable feedback

consistently and actively monitor and support student progress

Instructor presence can be conveyed through multiple channels (feedback, announcements, discussion facilitation, etc.), but each should have a relevant purpose and a consistent format in order to reinforce accountability, belonging, and trust.

Productive challenge, not friction

Effective learner experience design distinguishes between:

productive difficulty — that supports learning (e.g., retrieval, problem-solving, reflection)

vs.

unproductive friction — that drains effort without much productive learning (e.g., confusing interfaces, unnecessary steps).

Making learner experiences as seamless as possible: systems, infrastructure, and sustainability

Platforms and tools must not only function well independently, but also work coherently together.

In his book Stratosphere — on exploring digital classrooms — education innovator and systems reform leader Michael Fullan emphasized many years ago why digital learning needs to be as seamless as possible to ensure the integrity of the learning process for both learners and instructors.

Achieving a seamless experience depends on many interconnected components working together without fragmentation and incompatibilities that interrupt instructional flow, undermine confidence in the learning design, and tax the patience of both teachers and learners simultaneously.

Success requires a thorough and holistic approach to planning and resourcing. This matters all the more because so many rollouts have been plagued by interface, infrastructure, and software glitches of all varieties, resulting in frustration, downtime, and inefficiency, at the same time that the proliferation of screens and internet access has increased the likelihood of additional distractions and learning loss.

Robust planning, integration, and implementation are therefore essential.

Digital tools, supporting infrastructure, and instructional workflows must all receive appropriate attention: for compatibility, for integration, and from the perspective of the learner experience (UDL, LXD…).

Breakdowns in any single component can undermine the entire learning initiative.

As digital tools become more prevalent — and especially as initiatives scale — digital infrastructure needs sustained support across all components:

visible and invisible hardware

instructor competencies

instructional routines

access to peripherals

financing for upgrades and replacements

Teaching foundational skills and norms widely

Explicit instruction is also needed to ensure more consistent student readiness. Just as students historically received explicit instruction in how to use pencils, organize binders, or take notes effectively, students need to develop requisite digital skills and norms that help ensure safe and positive social learning across digital spaces and interactions, and more seamless navigation of digital environments and workflows.

Key questions for feasibility and sustainability planning include:

Are funding sources reliable enough to maintain the full set of tools and infrastructure required?

Do systems reduce complexity for educators, supporting efficiency rather than adding workload and stress?

Are implementation structures established in advance and refined iteratively over time?

Thoughtful attention to broad system inputs means vendors and educators partnering to ensure that digital learning formats operate in the most seamless ecosystem possible, across infrastructure, logistics, client and system support, and shared, explicit, and active norms for productive use and collaboration.

Why LXD matters for credibility and scale

For education leaders, edtech teams, and grant reviewers, learner experience is often the first visible signal of design quality.

Systems that feel coherent, purposeful, and supportive are ones that communicate seriousness and readiness — while fragmented or confusing designs raise immediate questions about sustainability and impact.

With intuitive interfaces and seamless digital ecosystems, strong LXD practices help ensure that the already complex and challenging process of large-scale learning feels more predictable, reliable, and as frictionless as possible.

🔑 LXD turns good intentions into real strengths — ensuring that sound instructional principles are experienced by learners (and their instructors) as usable, rewarding, and coherent.

V. Artificial Intelligence in Digital Learning: Opportunities, Concerns, and Guardrails

Artificial intelligence is now a visible and rapidly expanding feature of digital learning environments.

Here are the kinds of questions swirling around as the disruption unfolds:

How do we categorize AI products and designs?

AI is itself a capability that can amplify or transform pre-AI designs, as a kind of more powerful operating system. AI can also be a central feature or capability of new edtech designs, while also speeding up the pace of design innovation and creating an innovation horizon that is in constant flux and retreat.

Beyond “How can AI help teachers?” many education leaders are asking, “Under what conditions and in what applications will AI meaningfully support learning?”

Recent research and field reports about AI-assisted instruction reflect a nuanced reality of conflicting sentiments, with mistrust, caution, and skepticism understandably clouding the landscape…

In fact, just as AI is emerging in the education space and raising concerns that it is entirely supplanting cognitive effort and undermining literacy and critical thinking development, digital learning is also facing a considerable backlash over concerns about poorly designed “screen time use cases” exacerbating overall learning loss. While this narrative lacks nuance (about ways digital tools can support and scale learning with valid designs), the ubiquity and open access that young learners have to the internet and social media platforms and “gaming” formats (including for so-called learning) are threatening to tip the balance in the larger narrative.

Amid this volatile mix of interest, anticipation, and skepticism and distrust, it is important for both edtech leaders and innovation-minded educators to work together to set the right standards and pursue the best designs:

for harnessing the promise and full potential that digital tools offer

for truly focusing on the learning mission (vs. flashy bells and whistles and mere product proliferation and sales growth)

for looking at design questions holistically and grounding them within a larger framework of shifting inquiry: inquiry about learning objectives (what do today’s students need to learn?) and about instructional delivery (what possibilities are emerging for rethinking instructional processes and formats in light of emerging tools and capabilities?)

The fact is, we are already seeing that the fortunes of digital learning solutions, justifiably or not, may be tied, in the near term, to the success or failure of today’s generation of learners and educators. This means success requires more than making a quick sale or quick new bell or whistle — it will likely rely on more education innovators applying shared insights with a commitment to a shared mission- and purpose-driven focus.

🔑 The fortunes of digital learning solutions, justifiably or not, may be tied, in the near term, to the success or failure of today’s generation of learners and educators. This means success requires more than making a quick sale or quick new bell or whistle — it requires all education innovators to commit to applying shared insights with a holistic mission- and purpose-driven focus.

Rising optimism but lingering concerns…

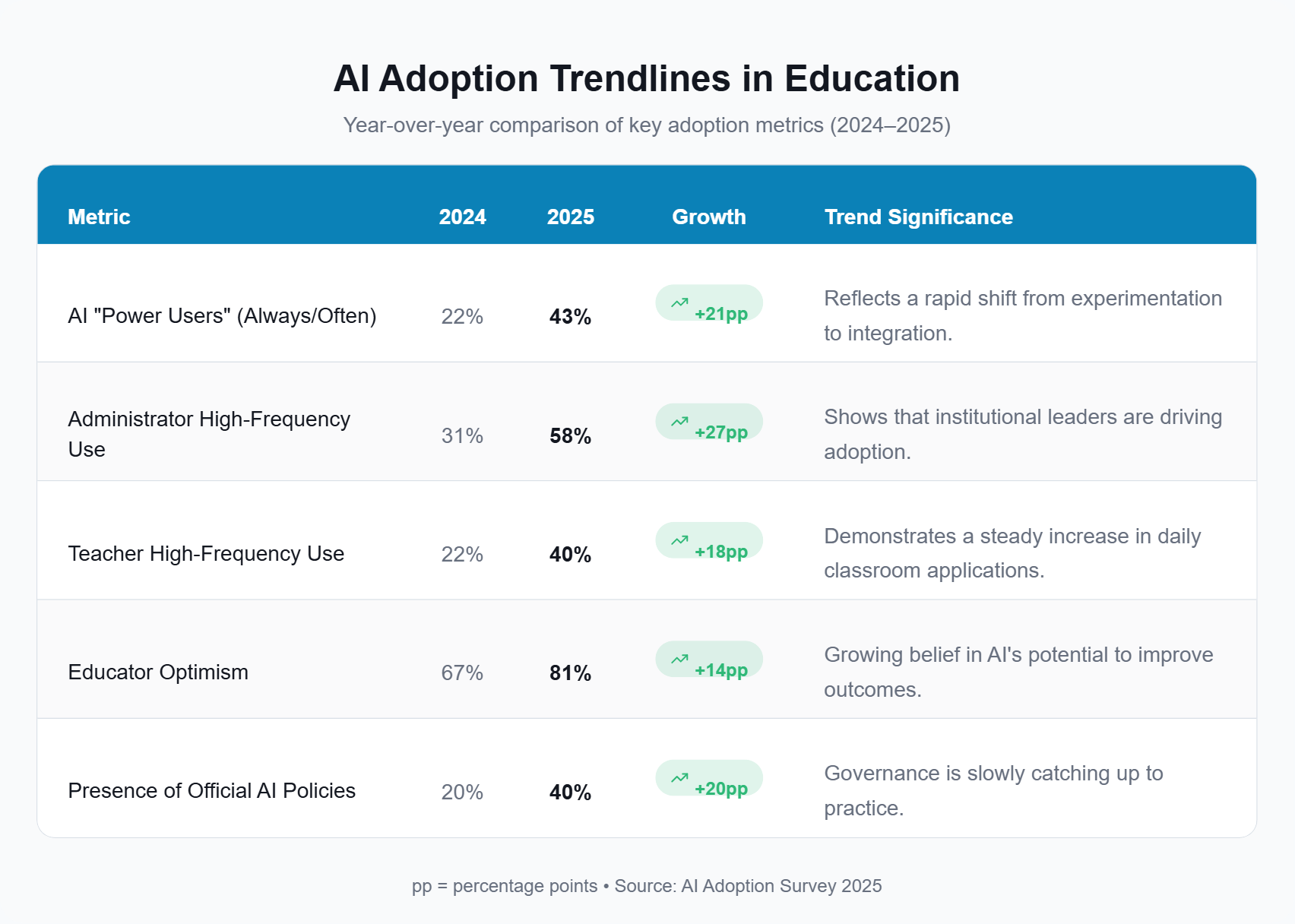

Recent national surveys of K–12 and higher-education educators from researchers at Carnegie Learning, Michigan Virtual, EDUCAUSE, and Microsoft suggest rapid growth in AI use and rising optimism alongside lingering concerns, all while organizations play catch-up in defining and instituting internal policies around AI, so that AI applications drive learning, do not distract from key learning objectives, and protect privacy.

Where AI is showing instructional value

Formative feedback and practice

generating low-stakes practice items

offering immediate feedback during drafting or problem-solving

Differentiation and scaffolding

adjusting reading levels or examples

supporting learners with language or background knowledge gaps

Instructor workflow support

summarizing student responses

identifying common misconceptions

reducing administrative load so instructors can focus on teaching

In these roles, AI can help extend practices already supported by learning science, such as retrieval, feedback, and targeted support, particularly when time and staffing constraints would otherwise limit consistency.

In terms of instructional implementation and best practice, effective AI integration depends on:

well defined instructional boundaries

transparency about appropriate use

alignment with existing curricular goals and assessment designs

For leaders, designers, and funders, credibility increasingly rests on explaining how AI is used, where its use will be counterproductive and intentionally constrained, and how potential or anticipated risks are to be monitored and mitigated.

AI implementation challenges, concerns, and potential risks

Emerging research highlights risks related to over-automation, erosion of productive struggle, uneven use among learners, software fragmentation, and challenges AI poses in evaluating student understanding and achievement:

Erosion of productive struggle

When AI tools simply supplant student thinking, they can short-circuit the cognitive effort required for learning in many contexts, particularly for novice learners.

The “use divide” or application divide (not just the access divide)

Early evidence suggests that some learners use AI as a thinking partner, while others rely on it for task completion. Without guidance, this gap may widen existing inequities rather than reduce them.

Opacity and evaluation challenges

AI-generated outputs can obscure how learning is occurring, making it harder for educators to interpret evidence of understanding or diagnose misconceptions.

Policy and governance lag

Institutional guidance often trails classroom practice, leaving educators to navigate privacy, integrity, and ethical questions without shared organizational norms.

Education sector surveys and research briefs point to concerns like these among education leaders, highlighting the need to manage uncertainty, risks, and governance protocols by building a wider and well-informed consensus around best practices and ethical standards.

Anticipating what comes next

Rather than treating AI as a category apart, many institutions are beginning to frame AI use through the same lenses that guide effective digital learning more broadly:

AI as augmentation, not substitution

AI tools are most defensible when they support feedback, practice, or sense-making, without removing the need for learners to retrieve, explain, and apply knowledge.

Alignment with UDL and learning science

AI features should reduce barriers and support agency, without increasing cognitive load and without replacing core learning processes.

Coherence before scale

AI tools should integrate into existing curricular and assessment frameworks, with broader applications needing initial vetting and piloting prior to scaling.

Explicit expectations and digital literacy

Learners and educators benefit from clear guidance on appropriate use, limitations, and the purpose of AI within learning tasks.

Innovation as integration, not mere proliferation — a demanding set of expectations for edtech designs

With AI innovation potentially exacerbating the risks of tool bloat or “add-onitis,” educators will no doubt continue to seek solutions that deliver efficient and effective integration — across curriculum delivery, assessment, individualization, and data analysis — while still supporting coherent, socially rich learning experiences.

"Add-onitis," coined by educational researcher and reformer Michael Fullan (author of Leading in a Culture of Change), is shorthand for tool bloat: the tendency of school systems to try to address stubborn learning gaps by adopting new solutions in a rapid, piecemeal fashion, resulting in fragmentation and teacher overload.

Why this matters for leaders, designers, and funders

For decision-makers, vague claims about “AI-powered” solutions are likely to raise questions rather than confidence. Proposals and products that explain how AI supports evidence-based practices, including clear use guidelines and guardrails, will signal greater innovation and reform credibility and a safer and more reliable approach to helping learners succeed.

VI. Common Implementation Challenges

As the preceding sections suggest, digital learning initiatives rarely unfold under ideal conditions. Many of the most persistent challenges are not isolated problems, but recurring patterns that surface across formats, contexts, and systems.

As pilots and implementation phases roll out, leaders should treat these challenges as opportunities for organizational learning and iterative improvements to both design and practice.

Common constraints to anticipate

Tool sprawl and fragmentation

Multiple platforms and applications adopted without a unifying instructional framework

Equity gaps beyond access

Differences in how learners use tools, the quality of tasks assigned, and the supports provided

Overemphasis on devices rather than instructional design

Investments that prioritize equipping classrooms with hardware without sufficient attention to how digital tools are used instructionally

Overestimated learner independence

Assumptions that students will self-regulate effectively in digital environments without adequate scaffolding, instructional routines, feedback, and explicit instruction in relevant soft skills and norms

Professional learning treated as a one-time event

Initial staff onboarding and training without sustained coaching, collaboration, or opportunities to refine practice as implementation unfolds

Implementation fatigue and change saturation

Competing initiatives and limited capacity that reduce follow-through, even when individual tools or models are well designed

Security and privacy concerns

Risks associated with data collection, storage, and third-party tools that require clear policies, safeguards, and ongoing oversight

Ethical risks and unclear norms of use

Challenges related to appropriate use of digital tools and AI (including academic integrity, bias, and transparency) when expectations and competencies are not explicitly defined

Inadequate planning for sustainability

Insufficient attention to long-term funding, maintenance, staffing, and infrastructure needs once initial grants or pilot phases conclude

Imbalanced focus on engagement and outcomes

Designs that prioritize visible engagement without evidence of deeper learning or fail to anticipate varied levels of student motivation and engagement

Winning with a strong approach

In anticipation of these constraints, effective systems will plan accordingly, with robust investments, partnerships, and commitments to ensure that innovation isn’t about betting on a quick fix but about transformative and collectively informed change:

prioritizing instructional coherence

designing for learner variability from the outset

embedding professional learning into sustained instructional practice

including, engaging, and supporting organizational stakeholders in shared, change-focused professional learning and deliberation

This realism isn’t only about acknowledging limitations and challenges. Over time, a disciplined holistic approach can, sometimes quickly, reward organizations with results that exceed educators’ hopes and expectations — especially when innovation incorporates sustained iterations in practice, steady investments in educators’ professional development, and active change-focused governance.

🔑 Anticipating key constraints will help education innovators plan accordingly. Over time, a disciplined approach can mean beating the odds — leading to results that exceed educators’ hopes and expectations — especially when innovation incorporates sustained iterations in practice, steady investments in educators’ professional development, and active change-focused governance.

VII. Case Studies: Matching Approaches to Challenges

Since the value of digital learning models becomes clearest when helping solve specific instructional or system-level challenges, case studies can offer unique insights into how digital learning can empower creative designs tailored to localized needs and learner profiles.

The case studies below illustrate — across contexts — how education innovators can creatively and effectively adapt digital and blended approaches to their distinct settings and contexts, all while prioritizing instructional purpose and coherence and supporting positive and engaging learning experiences.

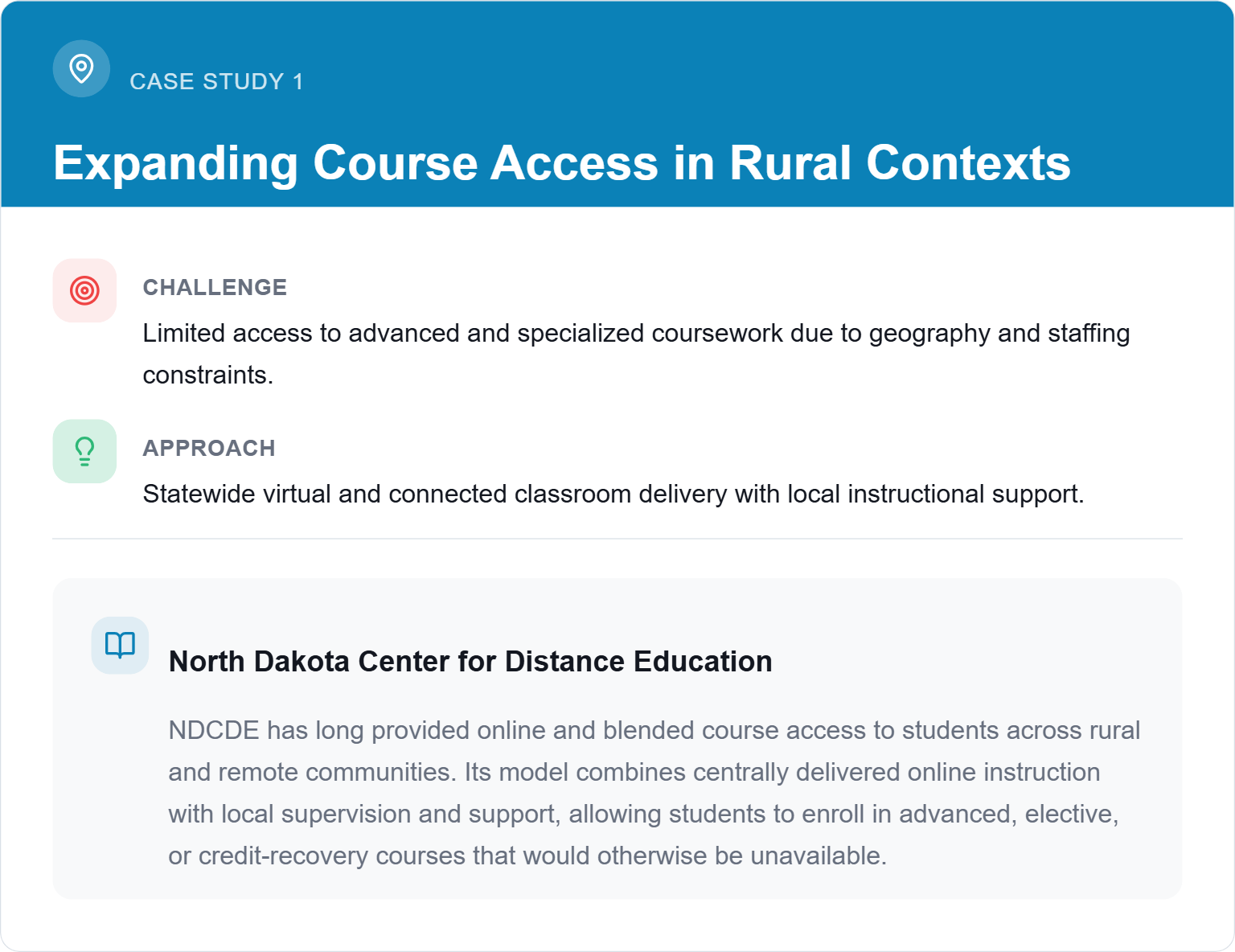

Why it works:

Digital delivery provided wider access to instructional support without displacing local educators.

Clear instructional structures supported consistency across sites.

Maintaining local control preserved accountability and student support.

This model illustrates how virtual and connected learning can expand opportunity while maintaining coherence and direct forms of personalized instructional support — achieving high standards despite rural education constraints.

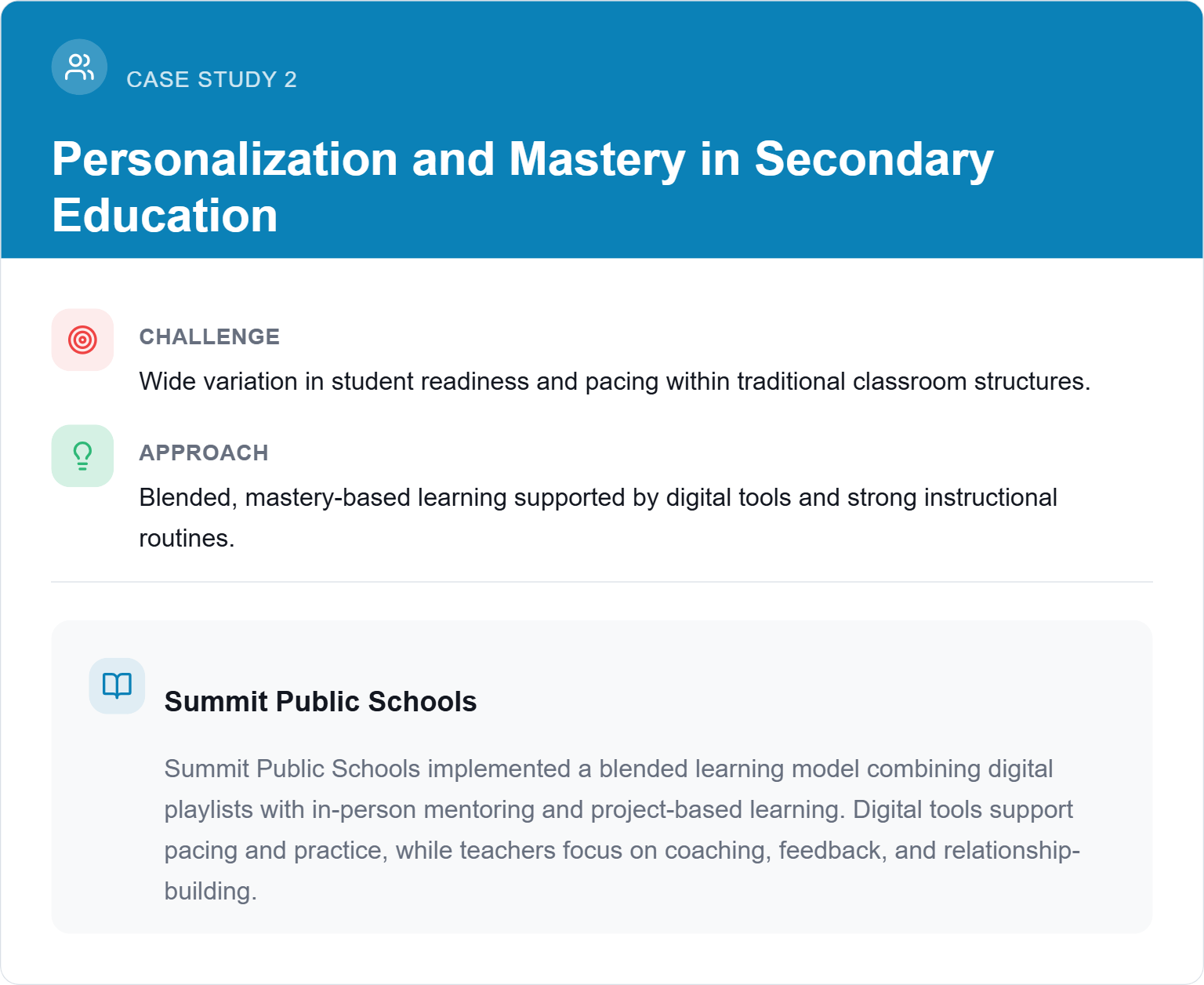

Why it works:

Technology supported but did not replace teacher-led instruction.

Clear learning goals and assessments preserved coherence and academic rigor.

Student agency was paired with explicit expectations and support.

Summit’s experience is frequently referenced in discussions of blended learning because it highlights the role of instructional culture and routines, not just tools, in sustaining personalized learning at scale.

Learn more at: https://bassconnections.duke.edu/about-bass-connections/

Why it works:

Digital tools were integrated into clearly defined instructional goals.

In-person time was redesigned for discussion, application, mentoring, and feedback.

Faculty development and course redesign supported coherence.

These efforts align with broader higher-education research showing that blended, active learning designs improve outcomes when technology is used to align instruction with documented localized learning needs or challenges, the learner experience, and sustained commitments to a coherent theory of practice.

What common features do case studies like these share?

Digital tools were integrated into clearly defined instructional goals, to achieve specific, concrete goals responsive to local interests, constraints, or demographic factors.

In-person time was redesigned for discussion, application, and feedback.

Both faculty development and regular academic course formats were (re)designed to ensure rigor, coherence, and positive learner experiences.

These efforts clearly spotlight the importance of ensuring that digital learning goes well beyond simply equipping sites and students with devices. When digital learning approaches are viewed holistically, informed collectively, targeted to local priorities and contexts, and implemented with a disciplined approach, then digital solutions can be leveraged creatively and effectively to achieve, scale, and sustain meaningful, targeted impacts.

VIII. Implications for Leaders, Designers, and Funders

Digital, virtual, and blended learning are no longer aspirational features of learning design, but are now at the heart of active reforms and innovations, even as new AI disruptions buffet the design, implementation, and policy landscapes.

This means education innovators can’t simply think about novel tools and features out of context. It’s imperative to take a holistic approach that emphasizes the role of educator engagement, training, and expertise.

In addition, digital tools and digitally-reliant methods should be touted as resources that empower educators to imagine and deploy new, more effective instructional designs informed by evidence-backed principles and practices.

As a result, decision-makers need to make a strong case for coherence, readiness, instructional purpose, and sustainability — not just innovation or technical capability as such.

The power of insights

At the outset, we asserted that two things are arguably more crucial pillars of success for tech-supported education innovation than the results of isolated efficacy studies: a more nuanced, accurate understanding of digital designs and formats, and educators making effective use of edtech tools. To close, let’s recap how these insights can serve leaders in their respective roles.

🔑 More nuanced, accurate understandings of digital designs and formats along with educators deploying and integrating edtech tools effectively are arguably more central to innovation success than are isolated efficacy studies as such.

For district and institutional leaders

Clarity around digital learning formats supports better decisions, allowing education leaders to benefit from:

distinguishing clearly between digital, virtual, and blended models

aligning specific digital formats to key instructional goals and challenges within local contexts

prioritizing coherence and capacity over rapid tool accumulation

In practice, this often means treating digital learning as a core organizational capability — one that requires governance, instructional alignment, sustained faculty learning, and ongoing refinement — rather than allowing it to evolve in silos or as a piecemeal collection of gadgets, programs, software tools, or platforms.

For edtech teams and instructional designers

As markets mature, credibility increasingly depends on instructional clarity.

Strong positioning emphasizes:

how digital features support evidence-based learning practices

how products integrate into coherent instructional systems

where flexibility and agency are intentionally integrated — and where they are deemed counterproductive

how edtech leaders sustain effective collaboration with educators beyond the sale, including instructional alignment, coaching, and iterative design

This kind of informed and holistic approach will distinguish serious instructional innovations from surface-level solutions, particularly in procurement and partnership conversations.

For grant writers and funders

Funding narratives are strongest when they connect design choices both to widely accepted best practices and to the priorities set out in funders’ requests and objectives.

Effective proposals:

explain how digital learning formats address specific challenges and support targeted student needs

align with research-informed principles such as UDL, coherence, and learning science

demonstrate awareness of implementation constraints and risks, along with clear strategies for ensuring ample adoption capacity and guardrails

outline key metrics and benchmarks for meaningful, targeted impacts

This comprehensive approach conveys insight, realism, and readiness — qualities increasingly valued in competitive funding environments.

IX. Conclusion: Building Coherent Learning Systems

Across digital, virtual, and blended learning contexts, one theme emerges consistently: effective digital learning is less about selecting individual tools or formats and more about building coherent learning systems — systems that anticipate learner variability, align with how learning actually works, and maintain instructional focus as complexity increases.

For education leaders, edtech teams, and mission-driven organizations, this clarity serves multiple purposes.

It supports stronger design and more realistic, robust planning for implementation and readiness.

It strengthens communication with stakeholders, partners, and funders.

It provides a more credible foundation for scaling innovation, with the promise of instructional integrity and a laser focus on meaningful and enduring outcomes.

As digital learning continues to evolve rapidly and becomes more widely embedded in educational practice, the most meaningful differentiator will be informed and intentional design. Clear models, research-aligned practices, well-defined local needs and targets, and coherent, holistic approaches remain the most reliable guides for translating digital learning into durable impact.

About EdPro Communications

With a focus on content marketing and grant writing, EdPro Communications uses education insights to help edtech businesses, district leaders, and mission-driven organizations articulate their value, build credibility, and achieve greater adoption and impact.

Learn more about our services and schedule a discovery call to get started!