The Science of Learning: An Evidence-Based Framework for Reliable Instructional Design

In education and EdTech, good intentions and compelling features aren’t enough. This post explores why cognitive science provides a strong framework for guiding important components of instructional design, and how evidence-based design choices align with how learners actually think, process, and progress. For funders, educators, and product leaders alike, core principles of learning science offer a grounded framework for building learning experiences that highlight expertise and drive meaningful impact for learning outcomes.

All educators, including new teachers, should be able to connect principles from the Science of Learning to their practical implications for the classroom…

— The Science of Learning, Deans for Impact

Our intuitions about learning can sometimes be plain wrong, and it would be a waste to overlook the growing evidence base regarding the effectiveness of various teaching or learning strategies.

— Sean H. K. Kang, “Spaced Repetition Promotes Efficient and Effective Learning: Policy Implications for Instruction,” Policy Insights from the Behavioral and Brain Sciences (2016)

Despite being very popular and widely used by children, EdTech products often lack research-based insights on how we learn…

— “Applying the Science of Learning to EdTech Evidence Evaluations,” npj Science of Learning (September 2023)

Introduction

Whether envisioning, designing, or investing in educational products, crafting or assessing curricula, or writing grants for educational programs, you're making implicit claims about how learning works. This makes it essential to anchor your work in clear, effective design frameworks in order to:

Engage educators with instructional solutions and offerings that signal credibility and real potential for making meaningful and measurable impacts on learning, rather than relying on mere surface-level features

Ensure any claims about learning design can be backed up persuasively, with insights from reliable research

One of the most sure-fire ways to meet these benchmarks is by incorporating credible principles of learning science into learning designs.

The good news is that recent decades have seen significant strides in brain science research. And although many of these findings may seem abstract on their own, when distilled for application in the field of instructional design they offer highly practical lenses and frameworks for improving instructional outcomes:

guiding and diagnosing EdTech designs

helping decision makers evaluate spending proposals

crafting more compelling, research-aligned funding narratives

discerning between engagement and achievement, or between core instructional designs and collateral instructional influences (such as localized variables across a spectrum of learners or instructional dynamics)

All of these frameworks and insights will help education innovators design more effective instructional tools, approaches, platforms, and solutions, or judge which ones are more likely to hold up in practice and deliver a more lasting and significant impact on learning.

In other words, learning science equips education leaders to align instructional designs, planning, and investments with insights into the cognitive mechanics of learning — into how learners truly learn and progress.

In this guide you’ll learn:

the essentials of what science-informed design looks like (and doesn’t look like)

the most relevant insights from cognitive research and multimedia design — and how they inform best practices for instructional design

emerging trends in instructional innovation and the promises and risks they present

how learning science insights translate into reliable decision-making frameworks — for crafting reliable instructional design, compelling funding proposals, and more authoritative marketing and communications strategies and narratives — in order to raise credibility, improve competitive positioning, and convey strong commitments to improving education.

I. The Gap Between Innovation and Impact

Walk into any education conference and you'll be overwhelmed by “innovation theater” — flashy interfaces and feature-rich technology; promises of engagement and transformation; tools for saving time… What you won't see so much is evidence that any of it honors how the human brain actually processes, stores, and retrieves information.

In essence, it could be fair to say that the education market is simultaneously obsessed with "research-based" claims and remarkably tolerant of approaches that contradict decades of cognitive science. We know, for instance, that learning styles (visual/auditory/kinesthetic) have been largely debunked by research. Yet products still market to them because it feels intuitive to educators and parents.

We know that cramming doesn't produce durable learning. Yet curriculum pacing guides still prioritize intense coverage over deep understanding, with unit calendars that focus on coverage and quantity while often failing to integrate the simple, proven routines from learning science that would actually make knowledge stick.

We know that engagement doesn’t necessarily imply learning — and yet we often forget to discriminate between behavioral activity (students are busy, clicking, moving) and specific kinds and phases of cognitive activity and where they fit in the larger learning process.

When innovation efforts go off track, focusing on visibility, speed, and attention-getting over cognition, it can be deceptively easy to conflate the visible “engagement” or “activity” that results from a mere multiplicity of media elements, dashboard features, and clickable links with the actual cognitive processing needed for deeper knowledge transfer or subject mastery.

Among the 124 most-downloaded EdTech mobile apps … most of them were judged to stimulate repetitive, distracting, and meaningless experiences with minimal learning value.

— “Applying the science of learning to EdTech evidence evaluations using the EdTech Evidence Evaluation Routine (EVER),” npj Science of Learning (6 September, 2023)

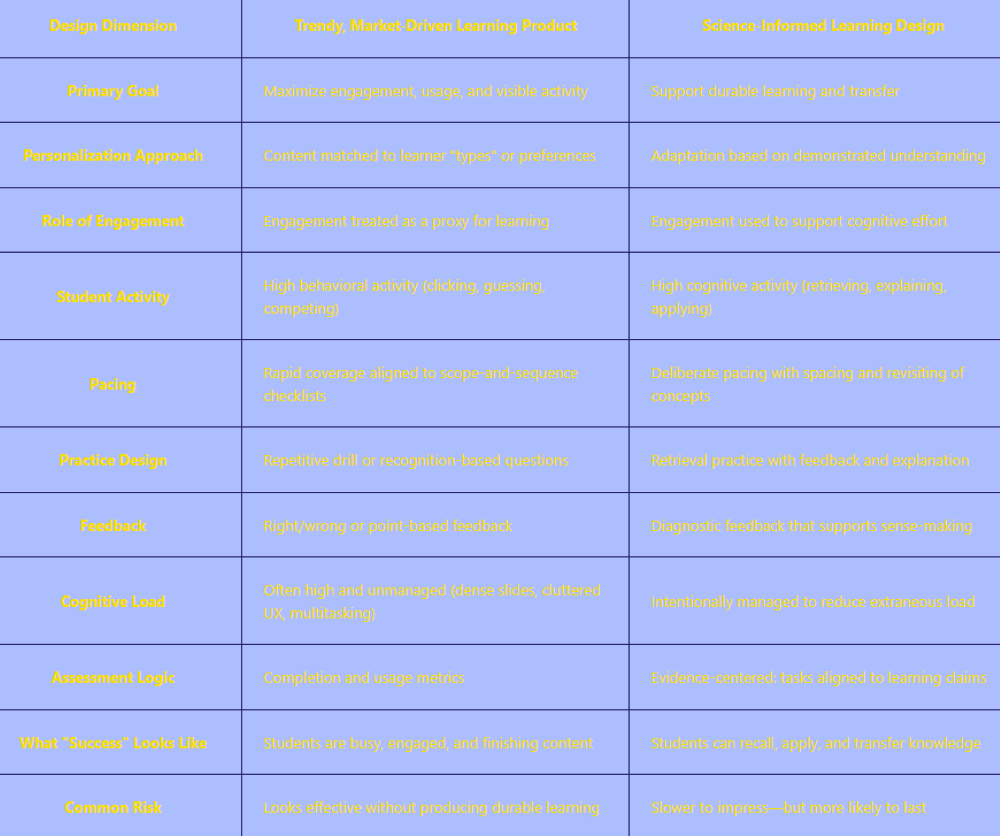

Innovation Theater vs. Science-Informed Learning Design

A side-by-side comparison

Not just another product…

Aligning instructional designs with rock-solid, research-validated principles of learning science means aligning design creativity and innovation with best practices based on the cognitive hardware common across learners and subject matter.

It’s also a market differentiation opportunity: the ability to articulate how a solution honors cognitive architecture is an opportunity to build trust and thought leadership, and a more reliable foundation for deploying a product that supports strong performance, enduring adoption, and effective scaling.

II. Debunking the Myths That Waste Money

Before we can talk about what works based on research, we need to clear away some popular misconceptions that cost credibility and resources. Despite billions in investments in EdTech across the US K-12 market, the headlines continue to reflect stubborn achievement gaps. The fact is, adoption is often too hasty, based on the hope of a quick fix. But without effective implementation, integration, and scaling, hopes often turn to disappointments and frustrations that fuel educator burnout and cynicism that hinder new efforts. In fact, it’s not uncommon for purchased tools and licenses to remain unused or underutilized at many sites.

In addition, studies often suggest that the impact of digital learning products on student outcomes varies widely — revealing no simple 1:1 relation between adoption and outcomes.

Initial findings from early adopters in schools are often very positive and based on anecdotal feedback from students and teachers. As the technology is applied more widely, more extensive research studies that attempt to quantify the impacts often show limited improvements in student learning, if any.

— “Evaluating the Evidence for Educational Technology,” AITSL (Australian Institute for Teaching and School Leadership Limited) (November 2023)

Learning Styles: Conventional Wisdom vs. Real Effects

The idea that students learn best when instruction matches their preferred learning style (visual, auditory, kinesthetic) may seem intuitive and can help make instruction more responsive to individual preferences. However, there’s limited research to support learning styles as a broadly effective design principle. Instead, cognitive research points to learning mechanisms that we all have in common, as opposed to those that are differentiated.

Evidence supports designing for shared features of how students learn — far more than categorizing students by type.

— Pashler et al., 2007; Willingham, 2009

This doesn’t mean learning style preferences aren’t real; they’re just not a strong pillar of learning design.

More important than learning styles are universal design principles that reduce barriers and cognitive load for all learners, through practices such as:

spaced reviews with active recall (spaced practice; testing effect)

layering of overlapping concepts and skills (interleaving)

progressing from a solid foundation of acquired knowledge (rote learning) to more active analysis, application, and problem solving (higher order thinking and building more complex theoretical frameworks)

The Engagement Trap

"Our students are so engaged!" is often the first evidence educators cite when defending a new approach. And while engagement matters and the behavior — clicking through an adaptive game, working in groups, creating colorful posters — is alluring, it doesn’t always entail meaningful inquiry and learning.

A high-energy Jeopardy review game might produce great energy in the classroom. But if students are focused on game mechanics, guessing randomly, or just enjoying the competition, minimal learning is happening.

The fix isn't to eliminate engagement of course, but to ensure clarity on what “engagement” looks like — such as activating intrinsic curiosity, motivation, and inquiry as well as an engaging sensory experience while ensuring instruction is scaffolded, guided by explicit learning objectives, and anchored in designs that help students make meaning of the material, connect it to prior knowledge, and organize it into retrievable schemas (semantic processing).

Subject Matter “Coverage” — What Teachers Taught vs. What Students Learned

"We covered it" is a mantra in standards-driven environments. However, like engagement, it can be a relatively hollow metric. In some contexts, educators may go as far as to conflate exposure with mastery, confusing what teachers taught with what students actually learned, and failing to pay sufficient attention to the practices that will help students assimilate skills and concepts and build more connected and holistic subject mastery.

However, despite these potential deceptions, “coverage” can encompass important components of the learning process. In particular, broad subject area familiarity and knowledge give students a crucial foundation for applying critical thinking within a subject.

To think critically about science, or history, or literature, we need a lot of domain-specific knowledge (Penner & Klahr, 1996). For example, one thinking skill in science is recognizing the importance of anomalous results. A surprising result tells you there is something to be learned in the data. But you can’t be surprised by a result if you haven’t made a prediction, and you need domain knowledge to make a prediction.

— Daniel Willingham and David Daniel, “Teaching To What Students Have in Common.” Educational Leadership, vol. 69, February, 2012

In addition to helping students meet benchmarks in a standardized assessment environment, “coverage” and the emphasis on more rote forms of knowledge acquisition (often discounted as “filling the bucket” and, yes, sometimes reduced to “cramming”) can still contribute to a larger learning process by equipping students for more nuanced and complex subject-matter-specific problem-solving, analysis, and application.

Passive or rote learning isn’t inferior to constructivist learning; rather, it provides background knowledge as a foundation for productive forms of higher order thinking.

This makes it important to consider:

how much background knowledge is most needed to enable deeper understanding and mastery (based on the learning context and objectives)

which elements of topical knowledge offer the most strategic and requisite foundation for spiraled progress in the subject area (the enduring concepts) — for making meaningful connections between overlapping or intertwined ideas and concepts

Approaching “coverage” this way offers a balanced perspective: neither falling prey to the fallacy that imparting “encyclopedic” knowledge is a meaningful goal in itself (i.e., over-reliance on a “more is always better” perspective), nor going to the other extreme and discounting “passive” learning modalities wholesale.

This means comprehensive coverage and deeper mastery are not at odds with one another but complementary.

Constructivist approaches that downplay memorization, structured practice, and explicit instruction, while well-intentioned, assume that students can construct understanding through exploration and access to tools, rather than by internalizing key content through deliberate effort.

— “Why Knowledge Matters in the Age of AI.” The Science of Learning (30 June, 2025)

What Does This Mean for Instructional Design?

It means that specific stages of learning require broad coverage of new information and core concepts — across a range of contextual learning objectives, such as:

equipping students to engage in more complex subject-specific critical thinking, mastery, and application

preparing students for a comprehensive or competitive subject matter examination

preparing pre-literate children with a foundation in phonics as a stepping stone to early reading

preparing high school chemistry students with the knowledge, concepts, skills (and also tactical study skills) that will enable them to succeed in college chemistry

In most cases, a “more is better” approach won’t align fully with key learning objectives. However, discounting so-called rote knowledge transfer as such is not an effective approach either. A balanced approach entails determining and targeting a scope of “coverage” that will enable students to think effectively and more deeply in the subject matter as they progress: coverage that gives them foundations for the most relevant outcomes for the specific learning context, whether that means passing a qualifying exam, deepening subject matter proficiency and understanding, or gaining the mastery they need to advance to the next course or challenge level.

III. The Cognitive Mechanisms That Matter: How Learning Actually Happens

At the intersection of cognitive science and educational psychology, the science of learning offers research-grounded insights into how students learn, based to a significant degree on the brain hardware that shapes the learning process and imposes some near universal constraints.

Working Memory: A Limiting Factor

As the brain's temporary workspace — the blank pages of the learner’s mental scratch pad where conscious processing happens — working memory is a key component of learning hardware. It's where we hold information to “catch” it and get familiar with it.

But as we rush to take in new information, we quickly turn the page on what we just received in order to “catch” and get familiar with additional new material. As a result, recently learned material often fades quickly from memory, and we may move on to gathering new information before we’ve fully understood the information, concepts, or skills we just encountered.

This means it’s important to align design with the limits of learning capacity.

In addition to being severely limited in accuracy and duration, working memory is also severely limited in capacity. Historically, researchers estimated its capacity at "seven, plus or minus two" items. Current research suggests it's closer to four chunks of new information.

When you overload working memory — with dense text, complex visuals, unclear instructions, or too many new concepts at once — comprehension stalls. Learners become overwhelmed, give up, or resort to surface-level processing that won't transfer to long-term memory.

These constraints have serious implications for how we learn and what’s needed for learning efficiency, and — because we’re hardwired this way — learning design specialists would be unwise to ignore these factors.

Here are some takeaways in terms of applications for instructional design:

Avoid excess interface noise and complexity – the added “cognitive load” taxes the working memory and limits knowledge acquisition

Break instruction into manageable chunks — introduce no more than three to four genuinely new concepts per lesson

Incorporate cycles of timely review — reintroduce recently introduced concepts and prompt learners to engage in active recall and retrieval

Bring gaps to the fore — provide prompt feedback so learners can improve accuracy of learned concepts or fill knowledge gaps
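As a rough illustration of the chunking and review takeaways above, the sketch below splits an ordered list of new concepts into lessons of at most four, and attaches the previous lesson's concepts as that lesson's retrieval review. The function name `plan_lessons`, the `max_new` parameter, and the sample fractions unit are hypothetical, chosen only for illustration; the cap of four reflects the working-memory estimate discussed earlier, not a fixed rule.

```python
from typing import Dict, List


def plan_lessons(concepts: List[str], max_new: int = 4) -> List[Dict[str, List[str]]]:
    """Group an ordered list of concepts into lessons.

    Each lesson introduces at most `max_new` new concepts and revisits
    the concepts introduced in the lesson just before it.
    (Illustrative assumption: review covers only the previous lesson.)
    """
    if max_new < 1:
        raise ValueError("max_new must be at least 1")

    plans = []
    for start in range(0, len(concepts), max_new):
        new = concepts[start:start + max_new]
        # The first lesson has nothing to review, so this slice is empty.
        review = concepts[max(0, start - max_new):start]
        plans.append({"new": new, "review": review})
    return plans


# Hypothetical unit with nine new concepts.
unit = [
    "numerator", "denominator", "equivalent fractions", "simplifying",
    "common denominators", "adding fractions", "subtracting fractions",
    "mixed numbers", "improper fractions",
]

plans = plan_lessons(unit, max_new=4)
for number, plan in enumerate(plans, start=1):
    print(f"Lesson {number}")
    print("  new:   ", ", ".join(plan["new"]))
    print("  review:", ", ".join(plan["review"]) or "(none)")
```

A real pacing plan would also weigh how difficult each concept is and how much prior knowledge learners bring, which a simple count cannot capture.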

Long-Term Memory: The Actual Goal of Knowledge Transfer

Learning only happens when information successfully transfers from working memory to long-term memory, where it can be stored indefinitely and retrieved when needed. Unlike working memory, long-term memory is effectively unlimited.

The challenge isn't storage capacity, but getting information in there so it can be retained in a form that's organized, connected, and retrievable.

This is where schemas and “thinking” come in.

Schemas are organized knowledge structures or patterns that help learners make sense of new information by connecting it to what they already know.

“Thinking” means active mental processing, in the form of active knowledge retrieval and recall, problem-solving, and analysis — providing the level of engagement that’s needed to open doors into long-term memory.

What Does This Mean for Instructional Design?

Learning platforms need clarity around different learning goals, such as presenting new information vs. deepening insights into the meaning of new(er) ideas, facts, and concepts. And, when the goal is building greater command of key concepts and helping learners retain and access this knowledge, practical strategies and designs should focus on:

Emphasizing pivotal and enduring learning objectives

Engaging students in active explanation or problem-solving

Tasking students with organizing information or concepts

Using mnemonics

Providing consistent, spaced review that includes active practice and recall

Layering related target concepts into spaced practice formats

Using low-stakes quizzes to engage active recall and to provide formative feedback (individual feedback for each student and whole-class feedback to inform ongoing instruction)

With this goal in mind, EdTech products can't just deliver content; they need to:

activate prior knowledge

engage learners in assimilating new knowledge

transition learners to higher level thinking that applies skills and concepts in diverse combinations and diverse forms of problem solving and meaning making

facilitate active mental manipulation and review of core concepts, with retrieval and practice over spaced intervals

Cognitive Load Theory: A Powerful Lens for Instructional Design

John Sweller’s Cognitive Load Theory is one of the most influential frameworks in modern instructional design.

It gives education leaders a practical way to analyze mental effort — offering instructional designers a valuable lens for deciding what to simplify, what to sequence, and where to focus learners’ attention.

At its core, the theory distinguishes between three types of cognitive load: intrinsic, extraneous, and germane.

1. Intrinsic Load: The Complexity That’s Fixed

Intrinsic load is the inherent difficulty of the material itself.

Calculus is more complex than basic math.

A critical essay requires more thinking, analysis, and nuance than a summary.

A French speaker will find it easier to master Spanish than Chinese.

This kind of load, intrinsic load, remains independent of design decision, but it’s a factor that should still help guide instructional sequencing, pacing, and formats.

In other words, what designers can control is how complexity is broken down and introduced and learned and mastered more deeply:

through chunking and effective sequencing

integrating spaced review, recall, and rehearsal activities and formats

ensuring learners have the necessary prior knowledge before they move on to new concepts

Design implication:

Intrinsic load is independent of the instructional design, but design choices should help learners manage intrinsic load by enabling them to:

build foundations at an appropriate pace

make mental bridges between familiar and unfamiliar concepts

engage in spaced practice, with varied recall and application tasks, to solidify learning and transfer it to long-term memory

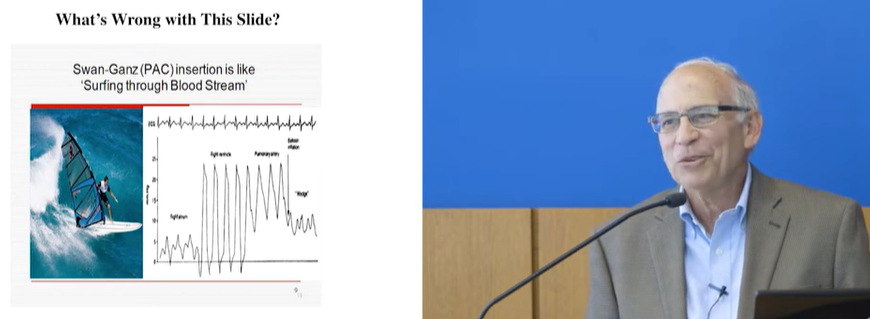

2. Extraneous Load: Minimize or Eliminate

Extraneous load is cognitive effort wasted on poor design. It adds nothing to learning — and can actively interfere with it.

Common sources include:

confusing navigation or unclear task instructions

visual clutter and dense layouts

information split across multiple screens or locations

text separated from the visuals it explains

slides read aloud verbatim

decorative graphics or animations that distract rather than clarify

Many education products introduce this kind of noise in the name of engagement. But attention-grabbing is not the same as attention-supporting. When design elements are competing with the learning task, they drain working memory that learners should be using to build understanding.

Design implication:

Reduce or eliminate peripheral design features that disperse attention and add unnecessary cognitive load or “distraction.”

3. Germane Load and “Desirable Difficulty”: The Effort That Actually Builds Learning

Germane load is the mental effort devoted to making sense of content, such as forming connections, organizing ideas, and building schemas.

This is the load that matters for deeper learning.

So, while it’s important to limit cognitive load on the one hand — to optimize learners’ processing capacity — there are also times to increase cognitive load (what researchers call desirable difficulty), in order to engage learners in more complex and nuanced mental processing efforts — the kind of higher order thinking tasks broken down in Bloom’s Taxonomy — to deepen command of the targeted learning objectives.

Design implication:

From a cognitive science perspective, this means not all difficulty results in cognitive overload — there are areas of desirable difficulty where mental struggle is productive.

Educators and designers should use approaches that isolate key information and concepts, to make them the proper object of focused mental attention and processing. They should “test” instructional designs by asking questions such as:

Where is the learner effort directed (and going)?

Is cognitive load germane (effort focused on deepening the understanding of key learning objectives), or extraneous (competing with the most relevant tasks and efforts)?

Is learning delivery sequenced and paced effectively, to allow for learning within the limits of cognitive capacity and at a productive challenge level?

For educators and EdTech designers, Cognitive Load Theory offers a simple guideline: if digital tools or features don’t help learners think more clearly about the core ideas, there’s a good chance they’re competing with learning rather than supporting it.

IV. The Principles That Predict Success

The insights we’ve shared translate into a limited set of core learning principles that are common to virtually all learners (regardless of individual learning preferences) and that consistently predict durable learning across age groups, subject areas, and settings.

These are not trends or pedagogical preferences. They are among the most well-replicated findings in the science of learning. This means they can play a prominent role in promoting credibility and authority — for EdTech product promotion and adoption, when making appeals for program funding, and in the realm of curriculum planning.

Three Core Principles of Cognitive Science: Retrieval Practice, Spacing, and Interleaving

Three core principles emerging from the science of learning that education innovators need to know about and apply are:

Retrieval practice

Spacing

Interleaving

Individually, each strategy plays an important role in the learning process.

Using them in combination — and with proper sequencing and integration — will help educators achieve meaningful and durable learning when the designs are implemented in supportive instructional settings.

1. Beyond Review: Retrieval Practice & The “Testing Effect”

One of the more counterintuitive findings that surfaces from learning science is that “reviewing” content is in fact not very effective for learning after all.

What is more effective? Actively retrieving information from memory — sometimes referred to as the “testing effect.”

While formats that facilitate the testing effect may still fall under the rubric of “review” broadly speaking, the testing effect is different from merely rereading or skimming text or notes.

The “testing effect” results from requiring students to expend additional mental effort, such as active recall, explanation, or application of key information and concepts.

The “testing effect” also gives the learner timely feedback on learning gaps: insight into what has been retained (or not) from prior learning, and into which information and concepts are fully understood and which are still vague or fuzzy.

This phenomenon is not about assessing knowledge for grading. It’s truly about acknowledging the iterative nature of learning and knowledge acquisition, and implies giving students permission to “forget” while on the path to building retention and deeper understanding, because cycles of “forgetting” are a natural part of acquiring new knowledge.

When learners review more superficially — rereading notes or highlighted portions of a textbook — information often feels familiar but is likely to quickly fade from memory. Understanding may also be partial or flawed, without the learner realizing it.

Retrieval practice supports learning in the same way that testing formats tend to: tasking students with pulling information out of memory and applying it with targeted levels of nuance, complexity, and proficiency.

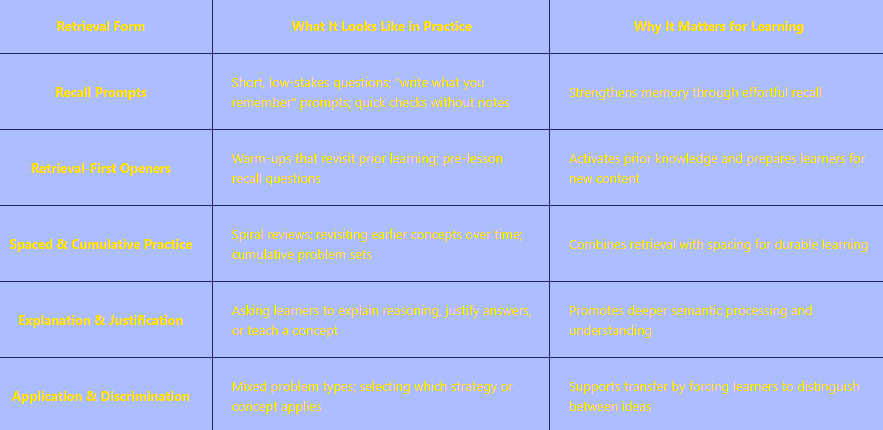

Common Forms of Embedded Retrieval in Learning Design

The cognitive work required by the testing effect facilitates deeper knowledge assimilation:

strengthening memory traces

building pathways to longer-term retention

revealing gaps in understanding that foster the review effort that’s most needed (as opposed to “cramming” or “skimming”)

For education innovators, this has clear implications:

Rather than “testing” retrieval only when it’s time for final exams, learning designs should embed this kind of recall or “testing effect” regularly within learning experiences (typically with low-stakes formative designs):

to support the transfer of new information and concepts

to create feedback loops — to inform iterative changes to instruction based on learning gaps

to intervene early with adaptive remediation for individual learners, as needed
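To make the feedback-loop idea concrete, here is a minimal sketch of how results from a low-stakes quiz might be summarized into class-wide gaps (to inform instruction) and per-student gaps (to guide early remediation). Everything in it is an assumption made for illustration: the `find_gaps` function, the tuple format for responses, the sample names and topics, and the 0.6 accuracy threshold are hypothetical and not drawn from any particular product or study.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Response = Tuple[str, str, bool]  # (student, concept, answered_correctly)


def find_gaps(
    responses: List[Response], threshold: float = 0.6
) -> Tuple[List[str], Dict[str, List[str]]]:
    """Flag concepts whose retrieval accuracy falls below `threshold`.

    Returns (class_gaps, student_gaps):
      class_gaps   - concepts the class as a whole struggled to recall
      student_gaps - for each student, the concepts they struggled with
    The threshold is an illustrative assumption, not a research-based cutoff.
    """
    class_totals = defaultdict(lambda: [0, 0])    # concept -> [correct, attempts]
    student_totals = defaultdict(lambda: [0, 0])  # (student, concept) -> [correct, attempts]

    for student, concept, is_correct in responses:
        for totals in (class_totals[concept], student_totals[(student, concept)]):
            totals[0] += int(is_correct)
            totals[1] += 1

    class_gaps = sorted(
        concept for concept, (ok, n) in class_totals.items() if ok / n < threshold
    )

    student_gaps: Dict[str, List[str]] = defaultdict(list)
    for (student, concept), (ok, n) in student_totals.items():
        if ok / n < threshold:
            student_gaps[student].append(concept)
    return class_gaps, dict(student_gaps)


# Hypothetical results from a short, ungraded recall quiz.
quiz = [
    ("Ana", "photosynthesis", True), ("Ana", "cell respiration", False),
    ("Ben", "photosynthesis", True), ("Ben", "cell respiration", False),
    ("Cal", "photosynthesis", False), ("Cal", "cell respiration", True),
]

class_gaps, student_gaps = find_gaps(quiz, threshold=0.6)
print("Reteach to the whole class:", class_gaps)
print("Follow up individually:", student_gaps)
```

The point of the sketch is the two audiences for the same data: the class-level list suggests what to revisit in instruction, while the per-student lists suggest where individual support may be needed.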

2. The Spacing Effect: More Than “Teach, Test, and Move On…”

Research shows that learning distributed over time (called “spacing” or “distributed practice”) consistently outperforms massed practice (more intensive cramming).

Spacing works because:

cramming exceeds the learner’s short-term capacity for retention

reintroducing information and concepts over short intervals (and then gradually over longer and longer intervals) leads to far more durable learning

When learners revisit material after time has passed, retrieval requires more effort and the brain has more time (including idle time) to “absorb” material into deeper layers of memory.

Spaced repetition is an evidence-based information encoding technique that improves recall efficiency by dividing vast subject-matter content into a series of short segments of information learned and revisited or rehearsed across temporally spaced intervals.

— Xuechen Yuan, “Evidence of the Spacing Effect and Influences on Perceptions of Learning and Science Curricula”

Regardless of mechanism, spacing effects are robust — occurring across various materials, procedures, and learner characteristics (Dunlosky et al., 2013).

— Justin Skycak, “Cognitive Science of Learning: Spaced Repetition (Distributed Practice)” (18 February, 2024)

In everyday instructional settings, however, it’s not uncommon for curricula and digital learning experiences to be organized around short-term coverage: teach, test, move on…

Clearly, cognitive science suggests an opposite approach.

Effective design means sequencing and formatting learning so that learners “revisit” key concepts over time, including in new contexts, with increasing complexity, and with greater and greater independence and mastery.

A profound consequence of the spacing effect is that the more reviews are completed (with appropriate spacing), the longer the memory will be retained, and the longer one can wait until the next review is needed.

— Justin Skycak, “Cognitive Science of Learning: Spaced Repetition (Distributed Practice)” (18 February, 2024)

For EdTech teams, this means resisting purely linear progressions and instead using designs that resurface prior learning deliberately, all while new learning is layered into the sequencing.

But, what are the optimal intervals for spaced repetition?…

There’s no established empirical “formula” for determining the optimal frequency of spaced repetition, or the optimal time intervals between each repetition. The best guide available might simply be what educators know and intuit from observations of learners’ progress:

Wait too long before revisiting the information or concept and it may be forgotten altogether, making the subsequent repetition less effective.

If you revisit the concept right away, it’s also less valuable for the longer term retention.

Finding the sweet spot will depend to some degree on the instructional context too, such as how much total cognitive load learners are grappling with across the school day, and the intrinsic cognitive difficulty of the target subject.

Also, in math and many STEM subjects, concepts are more tightly spiraled and interconnected than they are in most liberal arts subjects. This means revisiting one topic might implicitly reintroduce other prior topics that are building blocks of more advanced content and problem-solving abilities.

All this said, research has yielded one generally reliable framework for optimizing spaced practice:

Least Effective = massed practice (i.e. extended cramming)

More Effective = Consistently spaced (evenly distributed) repetition or rehearsal intervals

Most Effective = Gradually extending the time intervals between each rehearsal or repetition

In other words, as learners benefit from revisiting and processing previously introduced information or concepts, they extend the retention period. This means instructors can maintain a similar challenge level and efficiency level even though the length of the intervals regularly increases.
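The three tiers above can be sketched as review schedules. The example below is only an illustration of the pattern, since, as noted, there is no established formula for optimal intervals: the `review_days` function, the starting gaps, and the doubling factor are all hypothetical values chosen to make the contrast visible, not recommendations.

```python
from typing import List


def review_days(num_reviews: int, first_gap_days: float = 1, growth: float = 1.0) -> List[int]:
    """Return the day (counted from initial instruction on day 0) of each review.

    growth == 1.0 keeps every gap the same length (evenly spaced practice).
    growth > 1.0 lengthens each gap relative to the last (expanding practice).
    Both parameters are illustrative assumptions, not empirically tuned values.
    """
    days = []
    day = 0.0
    gap = float(first_gap_days)
    for _ in range(num_reviews):
        day += gap
        days.append(round(day))
        gap *= growth
    return days


# Least effective: massed practice, with every repetition on the day of instruction.
massed = [0] * 5

# More effective: evenly distributed reviews, one every three days.
even = review_days(5, first_gap_days=3, growth=1.0)

# Most effective: each gap is twice as long as the one before it.
expanding = review_days(5, first_gap_days=1, growth=2.0)

print("massed:   ", massed)     # [0, 0, 0, 0, 0]
print("even:     ", even)       # [3, 6, 9, 12, 15]
print("expanding:", expanding)  # [1, 3, 7, 15, 31]
```

With the same five reviews, the expanding schedule in this sketch keeps the concept in circulation for about a month, compared with about two weeks for the evenly spaced one. In practice the starting gap and growth rate would need to be adjusted to the subject, the learners, and the observations educators make about retention.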

In addition to the lack of any simple formula for designing rehearsal formats and the frequency and length of intervals, other practical constraints may help explain why spaced repetition is often not a pillar of instructional design, despite how central it is to the way the brain processes information:

1. Short-Term Performance Can Be Misleading: Massed practice often produces better immediate test performance and stronger feelings of fluency. This leads students — and sometimes educators as well — to prefer cramming, even though it undermines long-term retention.

2. Misaligned Incentives in Schools, Textbooks, or Other Resources: Curriculum pacing guides, textbook formats and sequencing, exam schedules, and accountability pressures often prioritize short-term coverage and performance over durable learning. These structures make spacing difficult to sustain without systemic support.

3. Learner Metacognition Works Against Spacing: Students frequently misjudge spaced learning as ineffective because spaced material feels less accessible in the moment. The student judges their learning success by how “easy” a task is in the moment, without intuiting longer-term progress as clearly (and not grasping what kinds of learning experiences are driving their longer term progress).

4. Time and Planning Demands on Educators: Implementing spacing requires intentional curriculum design, coordination across units, and sometimes reworking materials that are organized in blocked or chapter-based formats. Many teachers lack the time or institutional support to do this alone.

5. Not All Subjects Require Pure Spacing: In domains like mathematics, some degree of massed or blocked practice can be more effective, particularly for initial procedural fluency. The research supports strategic blending in these curriculum contexts, not the wholesale replacement of massed practice.

The implications for instructional design? Spaced learning is powerful — but it is not a plug-and-play tactic. Its effectiveness depends on thoughtful integration with other practices, such as retrieval, interleaving, scaffolding, and feedback.

Well-designed EdTech tools can make it easier for educators to ensure spaced practice and eliminate common barriers by:

automating spaced review schedules

surfacing prior learning at optimal intervals

supporting “interleaved practice” (see below) without increasing teacher workload

making long-term learning gains more visible to learners and educators

The key design question is not whether spacing works, but how to align it — with real classroom constraints, learner perceptions, and institutional incentives; and how to integrate it effectively — with other core principles of learning science.

Used thoughtfully, spaced learning shifts education away from short-term performance and toward what matters most: durable understanding that lasts beyond the test.

3. Interleaving: Designing for Deeper Understanding and Mastery

In order to build greater versatility and proficiency in a subject area, students need to go from acquiring the building blocks of subject knowledge to deeper and more flexible mastery — understanding how to combine and apply learned knowledge and concepts and discerning underlying principles and organizing schemas.

Knowledge tends to be inflexible when it is first learned. As you continue to work with the knowledge, you gain expertise; the knowledge is no longer organized around surface forms, but…around deep structure.

— Daniel Willingham, “Ask the Cognitive Scientist: Inflexible Knowledge: The First Step to Expertise,” The American Educator (Winter 2002)

This is where Interleaving comes into play.

Interleaving is a learning design strategy that alternates and/or combines material from among multiple concepts or problem types within practice sessions — increasing cognitive demand in ways that support transfer, schema formation, and flexible application of knowledge.

Because of the relative cognitive demands that come with interleaving, errors are more likely to arise. As they do, however, students benefit from the feedback loop: dispelling misconceptions and/or deepening mental schemas.

While interleaved practice feels harder and results in more errors, it forces learners to stress-test their recall and understanding, tempering their subject-matter mastery in the way that heat tempers metal:

Which strategy applies here?...

What’s truly different about this problem?… Just the surface features?...

What parallels, associations, differences, and problem-solving factors are more or less relevant for a particular task or question?...

This discrimination is where learning deepens. In fact, research in classroom and lab settings shows that interleaving improves a learner’s ability to apply knowledge flexibly — precisely the outcome that educators are targeting in most instructional contexts.

Interleaving requires merely rearranging some practice problems, without creating new material or otherwise altering the course… Nonetheless, it has dramatically improved mathematics learning in classroom-based randomized control trials — the gold standard of evidence.

— Marissa K. Hartwig and Doug Rohrer, “Interleaved Practice Improves Mathematics Learning.” Researching Education (2 April, 2021)
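The "rearranging" described in the quotation above can be sketched in a few lines of Python. This is a hedged illustration rather than a recommended algorithm: the `interleave` function, the topic names, and the problem labels are all hypothetical, and a simple round-robin is only one of many ways to mix problem types.

```python
from itertools import chain, zip_longest


def interleave(blocks):
    """Round-robin across topic blocks so neighbouring problems differ in type.

    `blocks` maps a topic name to its ordered list of practice problems.
    No new problems are created; the existing ones are only reordered.
    """
    missing = object()  # sentinel for topics that run out of problems early
    rounds = zip_longest(*blocks.values(), fillvalue=missing)
    return [p for p in chain.from_iterable(rounds) if p is not missing]


# Hypothetical blocked problem set: all fractions, then ratios, then percentages.
blocked = {
    "fractions": ["F1", "F2", "F3"],
    "ratios": ["R1", "R2", "R3"],
    "percentages": ["P1", "P2"],
}

mixed = interleave(blocked)
print(mixed)  # ['F1', 'R1', 'P1', 'F2', 'R2', 'P2', 'F3', 'R3']
```

The same eight problems appear in both versions. Only the order changes, so learners must first decide which strategy applies before solving each one.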

What does this mean for instructional design?

For designers, this means prioritizing germane cognitive demand over smoothness and surface retention. In this regard, interleaving challenges a common design instinct, which is to make learning feel seamless and error-free.

For education innovators, its value lies in shifting practice from comfort and fluency toward greater cognitive discrimination and discernment to help learners achieve real mastery and transfer.

For curriculum design, it means introducing learning sequences that move beyond tidy topic silos designed to reduce noise and cognitive demand (ideal for certain phases of learning) and adopting more reflective and more complex recognition and problem-solving tasks.

These tasks center on the targeted and enduring subject area skills — not only for improving end-of-year readouts on summative exams, but as a key component of the learning journey, for cementing and deepening learning and helping students experience greater self-efficacy.

In design practice, interleaving requires intentional sequencing and restraint:

knowing when to move beyond blocked practice

knowing when to mix concepts

knowing when to let learners struggle productively

EdTech tools and curricula that support this shift are better positioned to build durable understanding that goes beyond short-term performance gains.

Combining the Big Three for the Biggest Impact

The strongest effects emerge when instructional designs integrate all three principles effectively: spaced practice, the testing effect, and interleaving.

Spaced practice needs to incorporate retrieval tasks and concept interleaving in order to improve the odds that learners will achieve deeper understanding and more durable retention.

Blended effectively, these core concepts support the key brain processes that drive learning for almost all learners — based on our brain hardware — making them benchmark components of best practice for instructional design and reliability.

V. Multimedia Learning: Evidence-Based Rules for Digital Design

Cognitive scientist Richard Mayer spent decades studying how people learn from words and pictures. His research produced practical, evidence-based principles for designing videos, slide decks, e-learning modules, and other multimedia instructional materials.

Below are some of Mayer’s key concepts distilled into practical, evidence-based design principles:

1. The Coherence Principle: Strip Out “Seductive Details”

“Seductive details” are elements such as background music, decorative graphics, and tangential anecdotes. They feel engaging, but they increase extraneous cognitive load and distract attention from the learning goal.

Mayer’s research consistently shows that adding interesting but irrelevant information reduces learning.

If graphics or animations (just like the ones used in the left column here!) don’t directly support the instructional purpose, they’re competing for limited cognitive resources.

Eliminating esthetic and attention-getting bells and whistles is often hard for design teams — or counterintuitive — especially when there’s pressure to make learning materials “fun” for the learner and “appealing” or “seductive” when making pitches to prospects. In the end, however, the balance isn’t truly between boring and engaging, but about prioritizing purposeful design.

2. The Contiguity Principle: Keep Related Elements Together

When learners must split attention between separate sources of information, working memory gets used for searching and holding content rather than learning it.

For EdTech interfaces, this means tightly integrated visuals and text:

Place text labels next to the parts of a graphic they describe

Put explanations adjacent to visuals — not on another slide, screen, or legend

For presentations — avoid slides with bullet points on one side and graphics on the other.

For video — use annotations only when and where they’re precisely relevant

3. The Modality Principle: Pair Graphics with Spoken Narration

Research also shows that graphics paired with spoken narration are more effective than graphics paired with on-screen text.

Presenting both text and visuals simultaneously overloads the same processing channel — learners must choose between, for example, reading the text vs. inspecting and decoding the diagram. Narration distributes the load: eyes can focus fully on the graphic, while ears process the explanation.

This issue isn’t about learning styles but about cognitive capacity and brain processing, and it is an example of where the “learning style” argument falls short. From a design standpoint, putting a crucial diagram side-by-side with a list of bullet points may seem as natural as ever, but brain research suggests it’s not highly effective.

Why This Matters for EdTech Marketing

Instructional tools — and in many cases your demos, explainer videos, and website — should leverage and model good multimedia design. When buyers experience clarity and cognitive ease, it shows that design teams understand how learning actually works.

VI. A Note on the Affective and Psycho-Social Dimensions of Learning

Cognitive principles provide a stable, well-replicated foundation for instructional design. At the same time, it’s important to not lose sight of the ways that social, emotional, and environmental factors also contribute to or impact learning.

For this reason, researchers often describe learning influences across multiple domains.

Alongside the cognitive domain, two other commonly cited domains are:

The affective domain — motivation, values, attitudes, and self-belief

The psychomotor domain — relative proficiencies in physical and motor skills, from basic actions to complex performance (e.g., note taking, manipulating digital software, typing or keyboard skills…)

These domains are not alternatives to cognition; they interact with cognition.

Case in point, the findings of education researcher John Hattie.

Hattie has attempted to create a taxonomy of classroom inputs influencing learning. Many of these diverse instructional factors and conditions will seem peripheral or subjective compared to core learning principles uncovered by cognitive research. According to Hattie’s research, however, these inputs, even if incidental in some respects, can still have an outsized influence on learning.

For example, Hattie asserts that some highly interpersonal, affective, and environmental factors can have greater impact on learning outcomes than some of the core principles of learning science. Examples include:

teacher expectations

teacher credibility

students’ feelings of self-efficacy

Another researcher has also noted that a learner’s motivation for learning can influence the transfer of learning to long-term retention, and that spaced practice, in addition to strengthening learning through cognitive mechanisms, can support feelings of self-efficacy that foster intrinsic motivation (Xuechen Yuan, “Evidence of the Spacing Effect and Influences on Perceptions of Learning and Science Curricula”).

Why This Matters for Learning Design

Affective factors such as motivation, self-efficacy, and emotional safety can directly influence how much cognitive effort learners are willing or able to invest.

A student’s motivation to master a subject may be shaped by peer norms, a trusted teacher, or personal values, but it can also be strengthened or weakened by instructional design choices.

Similarly, psychomotor demands can become hidden barriers. In digital learning environments, for example, students who struggle with navigation, input tools, or interface complexity have fewer cognitive resources for the targeted learning, potentially triggering frustration or reduced confidence, which is likely to further impede engagement.

The key takeaways for educators and EdTech designers are:

The ultimate effectiveness of reliable learning science principles will in some measure depend on the conditions in which they operate.

EdTech products should provide learners the scaffolding they need, but avoid imposing scaffolding where and when learners don’t need it.

EdTech innovators will be equipped to convey greater credibility and provide stronger partnerships with educators if they understand the larger implementation dynamics (affective and environmental) that can influence the performance of science-based instructional tools and design elements.

Even well-designed, research-aligned learning experiences can be impacted by factors such as:

poor usability

misaligned incentives

low learner confidence

environments or influences that undermine attention or motivation

Conversely, when affective and psycho-social factors are thoughtfully supported, they can reinforce cognitive learning principles, rather than compete with them.

For education leaders and EdTech innovators the challenge is balance: applying research-informed approaches consistently and holistically and anchoring design in cognitive science while still remaining attentive to emotional, social, and environmental conditions — all for the complementary goals of optimizing learning outcomes and enhancing student wellbeing.

One pedagogical principle that can directly support learning across all three domains (cognitive, affective, and psychomotor) is scaffolding — structured, temporary supports that help learners accomplish tasks they could not yet complete independently, with those supports fading over time.

Scaffolding provides an adaptive approach to aligning instructional designs around key multi-dimensional aspects of learning. Below are two research-anchored theories of learning that highlight the need for nuanced scaffolding:

1. Zone of Proximal Development (Vygotsky)

Lev Vygotsky was a pioneering education theorist who emphasized the fundamentally social nature of learning. His concept of the Zone of Proximal Development (ZPD) describes the space between what a learner can do independently and what they can achieve with guidance from a more knowledgeable other. Learning is most powerful when instruction is intentionally designed to meet learners just beyond their current level, with support that gradually fades as competence grows.

For design, this means:

Support learners exactly as much as they need, not more

Double down on support when introducing new concepts or skills, especially more unfamiliar material with greater intrinsic load, and fade support in order to help students self-assess their progress and retain knowledge (e.g., "I do, we do, you do" or other modeling approaches)

Scaffolding doesn’t only affect cognitive load; it can also have a dynamic impact on affective factors such as students’ experiences of self-efficacy and their perceptions of teacher efficacy, both of which can also impact learning according to Hattie.

2. The Expertise Reversal Effect

As learners gain knowledge and skill, instructional supports that once helped these learners can begin to hinder their receptivity to learning.

The same step-by-step explanations, “chunking,” worked examples, and highly guided tutorials that reduce cognitive load for novices are likely to “bore” and distract more advanced learners, creating an affective barrier to learning while also introducing extraneous load that hinders processing.

Key implication: As the learners’ expertise grows, instruction should shift as well — toward problem-solving, autonomy, and opportunities to apply and extend knowledge rather than re-explain it.

What helps novices can hinder experts

Step-by-step tutorials become extraneous load for advanced learners

Effective EdTech products should be adaptive to learners’ evolving needs, adding or reducing scaffolds to align with learners’ readiness and the intrinsic load of the subject matter at hand, rather than applying design concepts simplistically.
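As a rough illustration of what adding or reducing scaffolds might look like in a product, the Python sketch below picks a support level from a learner's recent results. Everything in it is an assumption made for the example: the function name `choose_support`, the three support labels, and the accuracy thresholds are hypothetical placeholders, and a real system would need better evidence of readiness than a handful of right-or-wrong answers.

```python
def choose_support(recent_results, novice_below=0.5, expert_from=0.85):
    """Pick a scaffold level from the share of recent items answered correctly.

    `recent_results` is a list of booleans (True = correct).
    The two thresholds are hypothetical, not research-derived cut-offs.
    """
    if not recent_results:
        return "worked_example"  # no evidence yet: assume novice-level support
    accuracy = sum(recent_results) / len(recent_results)
    if accuracy < novice_below:
        return "worked_example"       # heavy guidance for novices
    if accuracy < expert_from:
        return "guided_practice"      # partial support as competence grows
    return "independent_problem"      # fade support for advanced learners


print(choose_support([False, False, True, False]))  # worked_example
print(choose_support([True, True, False, True]))    # guided_practice
print(choose_support([True, True, True, True]))     # independent_problem
```

The design choice worth noting is that support is withdrawn as well as added. Learners who demonstrate mastery are moved to independent problems rather than being kept in step-by-step tutorials.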

VII. From Principles to Design Decisions: Practical Frameworks That Work

Understanding cognitive principles is crucial, but the real work involves translating the principles into concrete designs, complicated by inevitable constraints.

Common complexities that challenge designers include:

limited time vs. expected learning outcomes

prerequisite knowledge gaps, and/or gaps that vary among learners

learners with diverse challenges and aptitudes

measurement challenges

integrating real adaptability

unrealistic stakeholder expectations

This is where frameworks become valuable: not as rigid templates, but as structured guides for mapping, informing, and streamlining design decision making.

1. Backward Design: Start With the End

Grant Wiggins and Jay McTighe's backward design framework has become ubiquitous in education and remains an enduring design principle for good reason: It forces designers to think about learning outcomes before they think about designing instructional sequences and formats. The goal? To ensure that how instruction unfolds within a given context aligns as optimally as possible with what learning outcomes matter most.

The sequence is simple…

Define learning outcomes first. What do you want learners to know and be able to do at the end of the full learning cycle?

Determine what evidence will show that learners have reached those outcomes: the assessment or work product they will complete at the end of the learning cycle.

Design the learning sequences and experiences that will equip students to acquire the knowledge and skills they’ll need in order to produce the planned work product with a high level of proficiency.

In essence, Backward Design is a nearly timeless, methodical, and transparent approach to ensuring strong alignment between learning assessments and the instruction that leads up to them. Because the end-of-instruction assessment will guide the design of the instructional path, it’s also important that the assessment itself is structured to provide a valid measurement of the targeted knowledge and skills (see evidence-centered design below).

The backwards planning approach helps instructors and designers create learning experiences that maintain a clear and consistent focus on the targeted knowledge and skills and coherent sequencing.

This sounds obvious, but most instructional design simply doesn’t get this kind of methodical approach. As a result, the learning outcomes, the instructional approaches and formats, and the learner experience are often less than optimal.

Backward design ensures that every component of your curriculum or product serves a clear learning goal, and that you also have well-designed formats for measuring student proficiency — both diagnostically (formative assessments) and at the later stages of the learning cycle (summative assessments).

For grant writers, backward design also maps perfectly onto logic models. Proposed inputs and activities (the learning experiences) align directly with outputs (the evidence of learning) and the targeted outcomes and goals (the ultimate impact). The combination of logical flow and valid metrics adds credibility and clarity to the proposal narrative.

2. Evidence-Centered Design: Make Your Evidence of Learning Explicit and Valid

Evidence-Centered Design (ECD) takes backward design deeper. It asks: What counts as evidence of learning, and why?

This matters because not all assessments are created equal. A multiple-choice test might show that students can recognize correct answers when they see them. But if your learning goal is for students to apply a concept to novel situations, recognition isn't adequate evidence. You need performance tasks that mirror real-world applications.

ECD forces you to make the chain of reasoning explicit:

What knowledge or skill are we claiming the learner has?

What observable behaviors would demonstrate that knowledge or skill?

What task would elicit those behaviors?

How do we interpret the evidence from that task?

This level of rigor is increasingly expected — in EdTech product development, competency-based education programs, and sophisticated grant applications.

When you can align with school and district mandates (their agreed upon curriculum standards) and articulate your evidence arguments clearly, it signals a strong grasp of stakeholder needs and effective pedagogy — you're not just measuring something, you're measuring what matters most to prospective stakeholders and for specific learning contexts.

3. Universal Design: Streamlining Learning Effort While Building Agency

Universal Design for Learning (UDL) is often misunderstood as a checklist: provide multiple means of representation, multiple means of action and expression, multiple means of engagement… But UDL has shifted, making it more about reducing barriers and increasing agency, not just adding options.

The 2024 updates to the UDL Guidelines (Version 3.0), released by CAST, represent the most significant revision of Universal Design for Learning in over a decade. Rather than treating UDL as a menu of instructional options, Version 3.0 reframes UDL as a barrier-reduction and agency-building framework, explicitly centering learner variability, identity, and context.

CAST & UDL 3.0 (2024)

CAST stands for the Center for Applied Special Technology, a non-profit education research organization that developed the UDL framework, providing guidelines for flexible learning environments that remove barriers for all learners.

Key shifts include a stronger emphasis on:

learner agency and identity

belonging, collaboration, and social learning

designing systems to anticipate learner variability and reduce barriers to participation

This approach works in two stages:

1. Reduce unnecessary load and barriers.

Reduce or eliminate obstacles that make learning harder for everyone, but especially for learners with learning or language barriers or limited prior knowledge—through clear instructions, consistent navigation, and accessible interfaces—ensuring as much unhindered access to, and participation in, learning resources as possible.

2. Provide features or options that genuinely increase agency and potentially motivation.

Offer learners multiple ways to demonstrate understanding

Integrate adaptive options for flexible pacing and varying levels of scaffolding

Provide choices in how learners explore content

These design elements can help learners adapt instruction to their needs and contexts while instructional formats remain aligned with evidence-backed learning principles.

The goal is meaningful options that serve learning, while avoiding choice overload.

How does this matter for education innovators?

It means that ease of use and adaptability, across a wide spectrum of learners, are crucial features of best practice alongside other core components of reliable design.

In addition, designing for variability often supports outcomes for all learners. When you reduce barriers to participation and increase opportunities for student agency you streamline the learning path and honor learners’ differences, boosting the odds that all students will be more engaged and experience deeper connections to the learning process and learning environment.

VIII. Emerging Trends in Learning Science-Infused Innovation (2025–2026)

As the educational technology landscape is rapidly evolving, a handful of prominent trends are meaningfully reshaping how learning is designed, delivered, and evaluated.

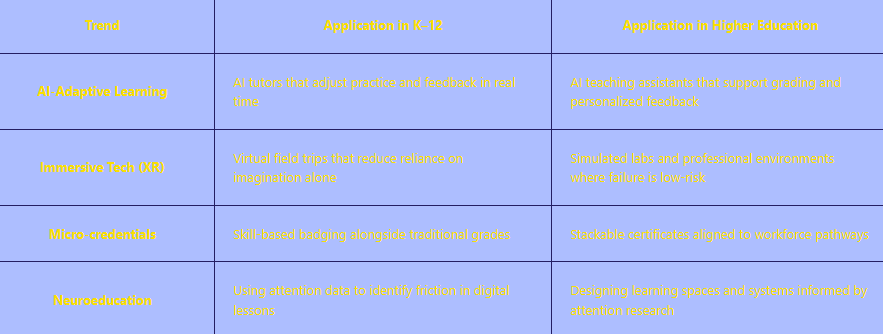

Below is a high-level view of the current trend landscape, followed by three developments that merit closer attention because of the opportunities they present for education innovators.

Emerging Trends at a Glance

Three Trends Leading the Way…

Trend 1: Generative AI: Changing Workflows Faster Than We Can Validate Quality

Generative AI is already being used or piloted for many kinds of tasks: drafting content, prototyping lessons, generating practice items at scale, and personalizing feedback for learners. In many cases, however, AI is automating or scaling efforts that were never informed by valid instructional designs to begin with. So while opportunities emerge, there are also risks, including:

AI generating content faster than teams can validate learning quality

Lack of insight into instructional principles — e.g., whether materials are appropriately scaffolded, cognitively demanding, accessible, free from bias…

Putting convenience and time-savings before quality and impact — producing content more quickly or at scale all while undermining core learning principles

But…

As AI-generated educational content floods the market, products that combine automation with human judgment grounded in learning science will stand out to discerning buyers, with implications for market positioning.

For example, instead of emphasizing that “AI-powered” is inherently better, innovators should highlight how products and programs are “learning science validated and AI enhanced.”

This helps signal a quality-assurance process that’s evidence based and pedagogically sound.

Trend 2: Learning Engineering: A Rigorous Design Framework

Learning engineering is emerging as a unifying discipline that integrates learning science + human-centered design + data-informed iteration.

The focus is simple but powerful: building learning systems that improve over time by integrating cognitive principles, affective factors, and data-driven feedback loops to inform simultaneous improvement and scaling.

Core elements include:

grounding design decisions in learning science

understanding learner needs through human-centered research

embedding measurement from the start

iterating based on evidence of impact

Learning engineering has implications for boosting credibility:

For EdTech teams — by providing a rigorous development framework

For districts and institutions — as a signal that a vendor designs for outcomes, not just surface features

In grant proposals — aligning narratives with up-to-date evidence-based standards in the domain of teaching and learning

Trend 3: Educational Neuroscience: Useful Frameworks, Easy To Overclaim

Neuroscience has enriched our understanding of memory, attention, and skill development, but educators can also be seduced by neuromyth marketing: claims that sound scientific without being instructionally meaningful.

References to brain activity are only valuable when they clarify actionable design decisions and impact engagement and learning.

For example, knowing that memory consolidation occurs during sleep is interesting. However, the implication for instructional design that matters is the insight into why spacing learning across days improves retention. Neuroscience can help explain why strategies like retrieval and spacing work, but products and marketing will be more credible when grounded in behavioral evidence — evidence of what learners can actually recall, apply, and transfer over time. Broad and overly abstract references to “neuroscience” and “learning science” as such can eventually erode trust, especially with experienced decision-makers.

Why These Trends Matter Together

Across all three trends, the pattern is consistent: technology is moving faster than our ability to evaluate learning quality.

Education leaders who ground innovation in learning science, while remaining clear-eyed about implementation challenges and risks and avoiding hollow claims and promises, will be better positioned to build trust, demonstrate impact, and avoid the cycle of hype and disappointment that too often breeds skepticism, even cynicism, among front-line educators.

IX. Real-World Applications: Putting Learning Science To Work

The design principles surfaced from cognitive science provide a checklist of best practices, but that’s only the beginning. They are most powerful when used as a rigorous framework for design decisions and as a diagnostic lens for quality assurance:

to ensure strong alignment between goals and design

to discern between mere engagement vs. genuine cognition and to support both deeper engagement and more durable learning

to guard against mistaking exposure for mastery

When design is grounded in relevant learning science, it provides cohesion that’s aligned with real learning processes and a range of variables across learners and instructional settings, helping inform nuanced but critical design refinements across products, curricula, and programs.

The specifics vary by context, but the underlying principles provide frameworks that go a long way in taking assumptions, bias, and guesswork out of the design equation, and in building in features that boost quality and credibility.

This solid approach also creates a strong foundation for:

improved market positioning

raising credibility

building thought leadership

For EdTech Product Teams

Well-designed learning tools make learning modalities visible and intentional and keep them strongly aligned with the foundational learning processes shared by virtually all learners.

At a minimum, this means managing cognitive load deliberately:

interfaces are clean and navigable

instructions are clear

learners are never asked to hold information in working memory while searching for related content or decoding unnecessary visual complexity

Strong products also embed retrieval throughout the experience, rather than reserving it for end-of-unit assessments:

learners are prompted to recall prior concepts before moving forward

learners revisit earlier ideas after time has passed — rather than just “moving on” to the next new concept

instructional formats prompt learners to apply knowledge in mixed contexts

Knowledge acquisition and skill development reflect fluid, iterative processes, not just “coverage and completion”:

difficulty increases thoughtfully

prior knowledge is activated and revisited

core concepts reappear across time rather than disappearing once a module is “finished”

Features that support a spectrum of learner differences are (more) fully integrated:

scaffolding and challenge levels activate prior knowledge and target students’ zone of proximal development

when relevant and feasible, designs allow for adaptive support and pacing

the larger learning context promotes a positive affective and social learning environment: highlighting explicit and relevant learning goals and benefits, creating avenues for students to deepen intrinsic motivation and curiosity, and striving to maintain a focused, rewarding, and safe learning experience for all students

When these signals are present, products feel purposeful rather than flashy — impressing and earning trust with experienced educators who really know what effective instruction looks like.

For Curriculum Developers

Curriculum aligned with learning science prioritizes durability over coverage.

A strong approach starts with backward design, adapts to variation in learners’ prior knowledge, and weighs subject matter difficulty (intrinsic load):

limiting the number of genuinely new concepts introduced at once

making connections to prior knowledge explicit rather than assumed

structuring and sequencing curriculum to target well-defined learning outcomes and to allow for processing, retrieval, and consolidation — beyond just exposure

At the program and system level, curricula informed by learning science and the affective/psycho-social dimensions of learning push against rigid topic silos.

Instead of organizing instruction as a sequence of isolated units, effective instructional designs revisit key ideas over time, in new contexts, and with increasing demands — discerning when to support learners with more rote forms of instruction and learning and when to add germane cognitive load.

practice is mixed

concepts are interleaved

deeper mastery of the most enduring knowledge and skills for ongoing learning, not short-term performance, drives targets and design

foundational learning serves as a platform for cross-curricular approaches that reflect real-world problem-solving, increasing relevance and authenticity

increasing mastery opens doors to deeper analysis, discovery, application, and synthesis — through designs that invite learners to engage in more open-ended inquiry and inflect the learning process with individual voice, creativity, and agency

For Grant Writers and Thought Leaders

Learning science strengthens proposals and public narratives by tightening the logical links between proposed practice and anticipated impacts and by grounding claims in explicit, evidence-based insights.

Rather than asserting that a program is “research-based” or “personalized,” strong proposals explain how specific design choices align with known cognitive mechanisms. Retrieval is named; spacing is described; constraints such as time, learner background, and instructional setting are acknowledged and addressed…

Equally important is the work of articulating in plain English how credible designs support goals and align with targets and objectives. Sophisticated reviewers know that research does not prescribe solutions or magically ensure predictable outcomes; it guides design decisions and reflects best practices.

Most compelling are clear insights into how specific principles were adapted to a specific instructional context or challenge while still aligning with concrete, measurable learning goals. Such insights signal expertise and credibility far more effectively than broad claims.

This same clarity strengthens thought leadership. When leaders can explain not just what works, but why it works and when it might not, they move beyond “pitching.” By offering nuanced insights, they demonstrate expertise, a purpose-driven vision, and greater credibility and authority.

X. Conclusion: Learning Science and Innovation Credibility

We began this post with a simple observation: education innovations frequently fail, not because of weak intentions or insufficient technology, but because they ignore how learners actually learn.

Across this post, we’ve examined the core constraints and opportunities that shape all learning — working memory limits, long-term retention, cognitive load, retrieval, spacing, interleaving, and the role of prior knowledge.

Far from passing trends, these principles are some of the most stable findings education research has produced.

As abstract principles alone, they offer no guarantee of success, but they do give education innovators a reliable foundation for judgment: a way to tell when a design is likely to promote enduring learning or align effectively with the desired learning objectives, as opposed to merely looking impressive in the moment. In a crowded education market, learning science literacy can therefore serve as a meaningful differentiator:

for product teams, it sharpens design decisions and builds trust with discerning buyers

for educators and institutions, it provides a framework for evaluating what’s worth adopting and what isn’t

for grant seekers and thought leaders, it helps build more credible and defensible narratives that align with prevailing research and guidance

When education innovators explain how their work supports durable learning and translates evidence into practice, they signal a rare level of credibility in a field shaped by cycles of easy promises. Applications of learning science, combined with a keen commitment to addressing sticky learning challenges and inequities, convey a seriousness of purpose and a readiness to partner effectively with educators in support of student success and wellbeing.

About EdPro Communications

With a focus on content marketing and grant writing, EdPro Communications uses education insights to help EdTech businesses, district leaders, and mission-driven organizations articulate their value, build credibility, and achieve greater adoption and impact.
